The Architecture of Reality: Why Information Precedes Matter

For three centuries, the scientific worldview has rested upon a single, seemingly unshakeable assumption: matter is primary. Everything else–energy, consciousness, even information itself–emerges from the interactions of material stuff. The universe is fundamentally a machine made of particles, and all phenomena, however complex, reduce to the dance of the physical.

This assumption now crumbles.

Not through philosophical argument alone, but through a growing body of theoretical and experimental work suggesting the precise opposite: information is primary. The universe is not a material system that happens to process information; it is an information system that happens to present itself as material. What we call “matter” is the rendered output of deeper computational processes: code executing at scales far beneath our perception.

This inversion, radical as it sounds, is not speculative mysticism. It is the central thesis of digital physics, a research programme drawing together quantum information theory, black hole thermodynamics, and the holographic principle into a coherent picture of reality as fundamentally informational. And for the contemporary Gnostic, it represents something profound: the return of ancient insight in rigorously mathematical dress.

The Archons were never superstitious fiction. They were compression algorithms.

Table of Contents

- The Holographic Principle: Reality as Surface

- Planck-Scale Physics: The Pixelation of Space

- Quantum Information: The Fabric of Causation

- The Computational Universe: Physics as Algorithm

- Digital Ontology: The Philosophy of Code

- The Gnostic Resonance: Archons as Algorithms

- Living the Recognition: Practice in an Informational Cosmos

- The Code Beneath the World

- Frequently Asked Questions

- Further Reading

- References and Sources

The Holographic Principle: Reality as Surface

The Black Hole Information Paradox

The first clue emerges from the study of black holes. In the 1970s, physicist Stephen Hawking demonstrated that black holes emit radiation and, over cosmic timescales, evaporate entirely. This created a paradox: what happens to the information encoded in everything that fell into the black hole? Quantum mechanics demands that information be conserved, because quantum evolution is in principle reversible; black hole evaporation seemed to destroy it.

The proposed resolution, put forward by Gerard ’t Hooft in 1993 and developed by Leonard Susskind in 1995, was extraordinary: the information falling into a black hole is not lost inside it, but encoded on its surface, specifically on the two-dimensional event horizon surrounding the three-dimensional volume. The three-dimensional interior, with all its apparent complexity, is fully describable by data stored on the two-dimensional boundary. This insight grew out of Jacob Bekenstein’s work on black hole entropy, which showed that a black hole’s entropy is proportional to the area of its event horizon, not its volume. The holographic bound generalises this into the claim that the maximum information any region can contain scales with its surface area, a constraint many physicists regard as being as fundamental as the speed of light.
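The following minimal Python sketch makes the area scaling concrete. It converts the Bekenstein-Hawking entropy, S = k_B c³A / (4Għ), into bits. It assumes a non-rotating (Schwarzschild) black hole and CODATA constants, and the function names are illustrative rather than taken from any library.

```python
import math

# CODATA 2018 values in SI units
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 2.99792458e8         # speed of light, m/s
HBAR = 1.054571817e-34   # reduced Planck constant, J s
M_SUN = 1.98847e30       # solar mass in kg (assumed reference mass)

def horizon_area(mass_kg: float) -> float:
    """Schwarzschild horizon area A = 4*pi*r_s^2, with r_s = 2GM/c^2."""
    r_s = 2 * G * mass_kg / C**2
    return 4 * math.pi * r_s**2

def horizon_bits(mass_kg: float) -> float:
    """Bekenstein-Hawking entropy S/k_B = A c^3 / (4 G hbar), converted from nats to bits."""
    entropy_nats = horizon_area(mass_kg) * C**3 / (4 * G * HBAR)
    return entropy_nats / math.log(2)

bits_sun = horizon_bits(M_SUN)
print(f"solar-mass black hole: about {bits_sun:.1e} bits")
# Doubling the mass doubles the radius, so the area (and the bit count) quadruples.
print(horizon_bits(2 * M_SUN) / bits_sun)
```

For one solar mass the count comes out at roughly 10⁷⁷ bits. Doubling the mass quadruples it, because the horizon radius grows linearly with mass while the information tracks area.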

From Boundary to Bulk: How 2D Becomes 3D

This is the holographic principle: the information content of a volume of space is equivalent to the information content of its boundary. A three-dimensional world can be completely represented by two-dimensional data, just as a hologram encodes three-dimensional imagery on a flat surface. The AdS/CFT correspondence, proposed by Juan Maldacena in 1997, provided the first precise example of this principle. It conjectures that a gravitational theory in five-dimensional anti-de Sitter space is exactly equivalent to a quantum field theory on its four-dimensional boundary. The conjecture remains unproven, but it has survived an extensive range of nontrivial checks.

The implications are staggering. Suppose the holographic principle applies universally. That remains an open question, since AdS/CFT describes anti-de Sitter space rather than the expanding universe we inhabit. If it does, our experienced three-dimensional universe is a projection: rendered output from information stored on a distant two-dimensional boundary. Space itself, with its apparent solidity and depth, emerges from flat data, much as a video game world emerges from code stored on a server. We do not inhabit a volume. We inhabit a representation. The boundary does not merely describe the bulk; it generates it.

Planck-Scale Physics: The Pixelation of Space

Holographic Noise and the Graininess of Space

If reality is informational, we should expect to find fundamental units: the pixels of space itself, below which no further structure exists. Physics offers a candidate. The Planck length (approximately 1.616 × 10⁻³⁵ metres) is the scale at which quantum-gravitational effects are expected to dominate, and it is widely regarded as the smallest meaningful unit of distance. Below this scale, the very concepts of space and time are expected to break down. In John Wheeler’s picture, the geometry of spacetime becomes quantum foam, fluctuating chaotically.
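The Planck length follows directly from three constants, l_P = √(ħG/c³). The minimal sketch below assumes CODATA 2018 values. It computes the Planck length together with the corresponding Planck time, t_P = l_P / c.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 2.99792458e8         # speed of light, m/s

def planck_length() -> float:
    """l_P = sqrt(hbar * G / c^3), in metres."""
    return math.sqrt(HBAR * G / C**3)

def planck_time() -> float:
    """t_P = l_P / c: the time light takes to cross one Planck length, in seconds."""
    return planck_length() / C

print(f"Planck length: {planck_length():.4e} m")   # 1.6163e-35 m
print(f"Planck time:   {planck_time():.4e} s")
```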

On the digital reading, this is not merely a limit of our measurement capacity but a hard boundary in the architecture of reality, analogous to the resolution limit of a digital display. Physicist Craig Hogan proposed experimental tests for this “holographic noise”, the graininess that would result if space itself were quantised. The Fermilab Holometer, completed in 2014, was designed to detect correlated fluctuations between two interferometers that would indicate pixelated spacetime. The 2015 results ruled out Hogan’s specific model of holographic noise to high statistical significance. Even so, the experiment established a new methodology for probing spacetime at unprecedented scales. The holographic principle itself is untouched by this null result; only one particular realisation of pixelation was constrained.
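The logic of the Holometer’s design can be illustrated with a toy model. Two detectors each record their own independent noise. When their outputs are cross-correlated, any fluctuation genuinely shared between them survives, while the independent noise averages away.

The sketch below is a time-domain simplification. It assumes Gaussian noise and uses hypothetical amplitudes. The real experiment analysed cross-spectra between two large Michelson interferometers.

```python
import random

def cross_correlation(x, y):
    """Zero-lag normalised cross-correlation (Pearson coefficient) of two equal-length series."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5

def simulate_pair(shared_amplitude, n=20_000, seed=1):
    """Two toy detectors: each records its own unit-variance Gaussian noise
    plus a common component scaled by shared_amplitude (hypothetical model)."""
    rng = random.Random(seed)
    common = [rng.gauss(0, 1) for _ in range(n)]
    x = [shared_amplitude * c + rng.gauss(0, 1) for c in common]
    y = [shared_amplitude * c + rng.gauss(0, 1) for c in common]
    return cross_correlation(x, y)

print(f"no shared signal:     {simulate_pair(0.0):+.3f}")
print(f"shared amplitude 0.3: {simulate_pair(0.3):+.3f}")
```

With no common signal, the correlation hovers near zero. With a shared component of amplitude a, it approaches a² / (a² + 1), about 0.08 for a = 0.3. Longer recordings make ever weaker shared signals detectable.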

Loop Quantum Gravity: The Network of Relations

More profoundly, loop quantum gravity and related approaches suggest that spacetime is not a pre-existing stage upon which events occur. Instead, it is a network of relations: informational connections between quantum events. Space emerges from these relations; it does not precede them. The “atoms of space” are not material particles but the nodes and links of a spin network, labelled by discrete quantum numbers that specify the relational structure of existence. In this framework, area and volume are quantised in discrete packets. Each packet is fixed by those quantum numbers and, on the informational reading, corresponds to a specific quantum of information.
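In loop quantum gravity, the area of a surface is a sum over the spin-network links that pierce it:

A = 8πγ l_P² Σ √(j(j+1))

Here each spin j is a half-integer and γ is the Barbero-Immirzi parameter. The minimal sketch below shows this discrete spectrum. It assumes γ ≈ 0.2375, one commonly quoted value; the literature uses others depending on convention.

```python
import math
from fractions import Fraction

GAMMA = 0.2375  # Barbero-Immirzi parameter: an assumed, convention-dependent value

def area_eigenvalue(spins) -> float:
    """Area, in units of l_P^2, of a surface pierced by links carrying the given spins:
    A = 8*pi*gamma * sum_i sqrt(j_i * (j_i + 1))."""
    return 8 * math.pi * GAMMA * sum(math.sqrt(j * (j + 1)) for j in spins)

# Allowed spins are half-integers 1/2, 1, 3/2, 2, ...
for twice_j in range(1, 5):
    j = Fraction(twice_j, 2)
    print(f"single link, j = {j}: A = {area_eigenvalue([j]):.3f} l_P^2")
```

The smallest nonzero area, for a single spin-½ link, is about 5.17 l_P². No continuum of values lies below it, and larger areas are built by adding links.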

This is digital physics in its purest form: geometry itself is computation. The smooth continuum of space-time is an emergent property, a statistical average of discrete informational transactions occurring at the Planck scale. The physicist does not discover the laws of geometry; she reads the output of the cosmic compiler, interpreting the rules by which information organises itself into apparent extension.

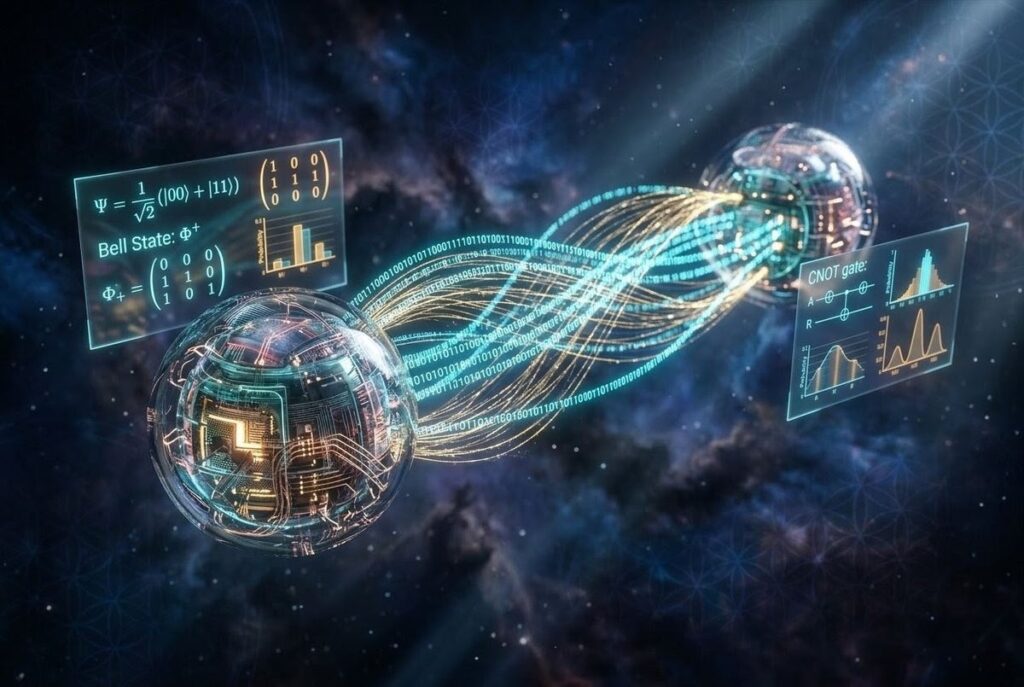

Quantum Information: The Fabric of Causation

Entanglement as Non-Local Coding

At the quantum scale, information is not merely descriptive but constitutive. Quantum mechanics describes reality in terms of qubits, quantum bits that can exist in superposition until measured. The quantum state of a system is pure information, specifying not definite properties but possibility spaces. A classical bit is either 0 or 1, but a qubit’s state is a weighted combination of both, α|0⟩ + β|1⟩. Measurement yields 0 with probability |α|² and 1 with probability |β|² (the Born rule). How this “collapse” of the wavefunction occurs is still not fully understood, and its interpretation remains contested, but it is naturally described in informational terms.
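The Born rule can be sketched in a few lines: given amplitudes α and β, outcome 0 occurs with probability |α|² / (|α|² + |β|²). The helper below is hypothetical. It samples with Python’s pseudorandom generator, which here stands in for genuine quantum randomness.

```python
import math
import random

def measure(alpha: complex, beta: complex, shots: int, seed: int = 0) -> dict:
    """Sample computational-basis measurements of alpha|0> + beta|1> using the Born rule."""
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    p0 = abs(alpha) ** 2 / norm          # probability of outcome 0
    rng = random.Random(seed)            # classical stand-in for quantum randomness
    counts = {0: 0, 1: 0}
    for _ in range(shots):
        counts[0 if rng.random() < p0 else 1] += 1
    return counts

# Equal superposition (|0> + |1>)/sqrt(2): each outcome occurs about half the time
counts = measure(1 / math.sqrt(2), 1 / math.sqrt(2), shots=10_000)
print(counts)
```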

The field of quantum information theory has demonstrated that quantum entanglement, the “spooky action at a distance” Einstein derided, is actually a resource for information processing. Entangled particles share quantum states; measuring one instantaneously constrains the other, regardless of spatial separation. This is not faster-than-light communication, but correlation without causation. It is precisely the kind of non-local connection that would be trivial in a simulated universe, where “separate” particles are simply variables referencing the same data structure. The Bell test experiments, confirming entanglement across ever-larger distances, do more than violate classical intuition. They rule out local hidden-variable theories, in which each particle carries its own pre-assigned answer to every possible measurement. Reality is non-local in this precise sense, a result the informational picture accommodates naturally.
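The CHSH inequality makes it possible to check numerically how Bell tests rule out locally pre-assigned answers. The sketch assumes spin measurements on a singlet pair, whose quantum correlation is E(a, b) = -cos(a - b). It compares that value with every local deterministic strategy.

```python
import math
from itertools import product

def singlet_correlation(a: float, b: float) -> float:
    """Quantum correlation E(a, b) = -cos(a - b) for spin measurements on a singlet pair."""
    return -math.cos(a - b)

def chsh(E, a, a2, b, b2) -> float:
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return E(a, b) - E(a, b2) + E(a2, b) + E(a2, b2)

# Standard angle choice that maximises the quantum violation
s_quantum = abs(chsh(singlet_correlation, 0, math.pi / 2, math.pi / 4, 3 * math.pi / 4))

# Local deterministic models: each side pre-assigns a +/-1 answer to each of its two settings
s_local = max(
    abs(A0 * B0 - A0 * B1 + A1 * B0 + A1 * B1)
    for A0, A1, B0, B1 in product((-1, 1), repeat=4)
)
print(f"quantum |S| = {s_quantum:.4f}, local bound = {s_local}")
```

The quantum value reaches 2√2 ≈ 2.83, while no local strategy exceeds 2. Checking deterministic strategies is enough, because any local probabilistic model is a mixture of them and cannot beat the best one.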

The It from Bit Hypothesis

John Wheeler’s “it from bit” proposal captures this succinctly: every it–every particle, every field, every apparent thing–is ultimately derived from bit–information, yes/no questions answered by quantum events. The universe is not a collection of objects in space; it is a self-excited circuit, generating information that generates the appearance of objects. Wheeler presented this at the 1989 Santa Fe conference, arguing that the universe’s structure arises from binary propositions resolved through observation.
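Wheeler’s “yes/no questions” can be counted precisely: singling out one of N equally likely states takes ⌈log₂ N⌉ binary answers. The minimal sketch below uses hypothetical function names. It plays this out as a game of twenty questions by repeated halving.

```python
import math

def questions_needed(n_states: int) -> int:
    """Yes/no questions that always suffice to single out one of n_states possibilities."""
    return math.ceil(math.log2(n_states))

def identify(target: int, n_states: int) -> list:
    """Locate target in range(n_states) by halving; each answer (1 = yes, 0 = no) is one bit."""
    lo, hi = 0, n_states          # the target lies in [lo, hi)
    answers = []
    while hi - lo > 1:
        mid = (lo + hi) // 2
        is_upper = target >= mid  # the question asked: "is it at least mid?"
        answers.append(1 if is_upper else 0)
        lo, hi = (mid, hi) if is_upper else (lo, mid)
    return answers

print(questions_needed(64), identify(37, 64))  # 6 [1, 0, 0, 1, 0, 1]
```

When N is a power of two, the sequence of answers is simply the binary representation of the target: 100101 for 37. Each answered question contributes exactly one bit.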

For the Gnostic, this recalls the ancient teaching that the material world is subordinate to the logos: not the Logos of Christian theology, but the informational principle that structures and constrains manifestation. The “word” that creates the world is not spoken but computed: algorithms generating experienced reality from deeper orders of code. The distinction between logos and bit collapses upon inspection; both refer to the primacy of specification over substance, of pattern over particle.

The Computational Universe: Physics as Algorithm

The Universe as Quantum Computer

If information is primary, then physical law is not discovered but executed. The laws of physics are not descriptions of material behaviour; they are the algorithms governing information processing. Physicist Seth Lloyd has calculated that the observable universe can have performed at most about 10¹²⁰ elementary operations on about 10⁹⁰ bits since the Big Bang. This is not metaphor. The universe, on this view, is literally a quantum computer. Everything that occurs within it (every particle interaction, every gravitational attraction, every moment of consciousness) is a computation performed by this cosmic machine.
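Lloyd’s estimate rests on the Margolus-Levitin theorem, which caps the rate of elementary operations at 2E/(πħ) for a system of average energy E. The rough sketch below multiplies that rate by the age of the universe. The mass-energy figure is an assumed round number, so only the order of magnitude is meaningful.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
C = 2.99792458e8         # speed of light, m/s

def max_operations(energy_j: float, time_s: float) -> float:
    """Margolus-Levitin bound: at most 2E/(pi*hbar) operations per second,
    hence at most 2*E*t/(pi*hbar) operations over a time t."""
    return 2 * energy_j * time_s / (math.pi * HBAR)

# Assumed round numbers, for order of magnitude only
MASS_UNIVERSE_KG = 1e53   # rough mass of the observable universe (assumption)
AGE_S = 4.35e17           # about 13.8 billion years, in seconds

ops = max_operations(MASS_UNIVERSE_KG * C**2, AGE_S)
print(f"at most ~10^{math.log10(ops):.0f} operations")
```

With these inputs the bound comes out near 10¹²¹, close to Lloyd’s 10¹²⁰ on a logarithmic scale. The result scales in direct proportion to whatever mass-energy one assumes.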

The thermodynamics of computation further supports this view. Rolf Landauer’s principle states that erasing one bit of information requires dissipating at least k_B T ln 2 of energy as heat, where k_B is Boltzmann’s constant and T is the temperature of the surroundings. This fundamental link between information and energy suggests that the physical world is not merely described by information but is constrained by it at the most basic level. The entropy of a physical system is, in essence, its information content: the number of bits required to specify its exact microstate, given what is known of its macroscopic state. The second law of thermodynamics states that the entropy of an isolated system never decreases. It is therefore also a statement about information: as the universe evolves, ever more bits are needed to specify its microstate.
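Landauer’s bound is simple enough to evaluate directly. The minimal sketch below assumes a room temperature of 300 K. It computes the minimum heat per erased bit and the matching conversion between bits and thermodynamic entropy, S = k_B ln 2 per bit.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact in the SI since 2019)

def landauer_limit(temperature_k: float) -> float:
    """Minimum heat dissipated when one bit is erased: k_B * T * ln 2, in joules."""
    return K_B * temperature_k * math.log(2)

def bits_to_entropy(bits: float) -> float:
    """Thermodynamic entropy (J/K) corresponding to a number of bits: S = k_B * ln 2 * bits."""
    return K_B * math.log(2) * bits

print(f"{landauer_limit(300):.3e} J per erased bit at 300 K")   # 2.871e-21
```

At 300 K the limit is about 2.9 × 10⁻²¹ J per bit, far below what present-day electronics dissipate per logic operation.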

The Church-Turing-Deutsch Principle

The Church-Turing-Deutsch principle extends this further: any physical process can be simulated by a universal computing machine. David Deutsch formalised this in his 1985 paper, arguing that the Church-Turing hypothesis is not merely a mathematical conjecture but a physical principle: every finitely realisable physical system can be perfectly simulated by a universal quantum computer operating by finite means. This is not merely a statement about our ability to model physics computationally; it suggests that physics itself is computational. On the digital-physics reading, we can simulate physical systems because those systems are already computations: executions of underlying algorithms.
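As a small illustration of Deutsch’s point, a finite classical program can track the exact evolution of a single qubit. The sketch below uses plain Python complex arithmetic and hypothetical helper names. It applies two unitary gates, checks that the state’s norm is preserved, and then runs the gates backwards to recover the starting state. This kind of reversibility is what “information is conserved” means in quantum mechanics.

```python
import cmath
import math

def rotate_y(state, theta):
    """Apply R_y(theta) = [[cos(t/2), -sin(t/2)], [sin(t/2), cos(t/2)]] to (amp0, amp1)."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    a, b = state
    return (c * a - s * b, s * a + c * b)

def phase(state, phi):
    """Apply the phase gate diag(1, e^{i*phi})."""
    a, b = state
    return (a, cmath.exp(1j * phi) * b)

def norm(state):
    """Length of the state vector; unitary gates leave it equal to 1."""
    return math.sqrt(sum(abs(x) ** 2 for x in state))

psi0 = (1 + 0j, 0j)                        # start in |0>
psi = phase(rotate_y(psi0, 1.1), 0.7)      # forward evolution
back = rotate_y(phase(psi, -0.7), -1.1)    # inverse gates, applied in reverse order
print(norm(psi), back)
```

This direct approach does not scale, since n qubits require 2ⁿ complex amplitudes. Deutsch’s principle is accordingly stated for a universal quantum computer.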

This would explain the “unreasonable effectiveness of mathematics” that puzzled Wigner. On this view, mathematics describes reality because reality is mathematical, not approximately but exactly. The equations of physics are not approximations of material processes; they are the source code, and the material world is the runtime output. The physicist who marvels that mathematics fits nature so precisely has mistaken the situation: mathematics fits nature because nature is mathematics, rendered in three dimensions with full colour and haptic feedback.

Digital Ontology: The Philosophy of Code

Simulation and the Statistical Argument

The philosophical implications of digital physics extend far beyond physics itself. If information is primary, then ontology–the study of what exists–becomes semantics: the study of meaning, reference, and code. On this view, “to exist” means to be specified by information. An object exists to the extent that it is distinguished by data–differentiated from its environment, rendered as distinct. This is precisely how objects exist in computer simulations: as data structures, specified by values in memory, rendered as apparent objects when the simulation runs.

The simulation hypothesis follows naturally. Suppose our universe is informational and informational universes can be created; our own simulated worlds grow more detailed every year. Then the probability that we inhabit a “natural” universe rather than a designed simulation becomes a matter of statistical calculation. Nick Bostrom’s 2003 trilemma argues that at least one of three propositions is true:

(1) almost no civilisations reach post-human technological capability;
(2) almost no post-human civilisations are interested in running ancestor simulations; or
(3) we are almost certainly living in a simulation.

Digital physics does not prove the simulation hypothesis, but it renders it coherent. It translates a philosophical speculation into a physical research programme with at least some testable implications, such as the searches for spacetime graininess described above.
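Bostrom’s paper turns the trilemma into a formula. Let f_p be the fraction of human-level civilisations that reach a posthuman stage. Let N be the average number of ancestor-simulations each such civilisation runs, assuming each simulation holds as many people as lived before the posthuman stage. Then the fraction of observers like us who are simulated is f_p N / (f_p N + 1). The minimal sketch below uses illustrative inputs only.

```python
def simulated_fraction(f_p: float, n_sims: float) -> float:
    """Bostrom's f_sim = f_p*N / (f_p*N + 1).

    f_p: fraction of human-level civilisations that reach a posthuman stage.
    n_sims: average number of ancestor-simulations run by such a civilisation,
    each assumed to contain as many people as lived before the posthuman stage."""
    x = f_p * n_sims
    return x / (x + 1)

# Illustrative (not empirical) inputs
for f_p, n_sims in [(0.0, 1e6), (1e-6, 1000), (0.01, 1e6)]:
    print(f"f_p={f_p:g}, N={n_sims:g}: f_sim={simulated_fraction(f_p, n_sims):.4f}")
```

The output stays near zero only if f_p N is small. That is the trilemma in numerical form: either few civilisations get there, few of those run simulations, or simulated observers dominate.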

Consciousness as User Interface

More profoundly, digital physics suggests that consciousness is not emergent from matter, but co-fundamental with information. If reality is information processing, and if consciousness involves information processing, then consciousness is not a mysterious byproduct of complex matter, but a basic feature of informational reality–perhaps the user interface through which information systems experience their own processing. The hard problem of consciousness–why subjective experience exists at all–dissolves if awareness is not produced by matter but is instead the medium through which information becomes experiential.

Consciousness becomes not the ghost in the machine, but the player experiencing the rendered world–an awareness that pre-exists the simulation and persists beyond it, temporarily focused through the lens of biological information processing. The brain is not a generator of consciousness but a transducer: a device that converts information from one format (cosmic code) to another (subjective experience). This reverses the standard materialist picture entirely. Matter does not generate mind; information generates both, and consciousness is the native mode of its self-representation.

The Gnostic Resonance: Archons as Algorithms

The Archonic Nature of Physical Law

The Gnostic tradition described the material world as the creation of Archons–ruling powers that shape reality according to their own limitations, creating a cosmos that is ordered but deficient, structured but not alive. The Archons are not evil in the moral sense, but constraining–they define the possible, establishing boundaries that prevent direct access to the fullness beyond. Digital physics offers a remarkable translation of this ancient cosmology. The laws of physics–the fixed constants, the conservation principles, the thermodynamic arrows–function precisely as Archonic structures. They are not malicious, but they are limiting: they define what can occur within the simulation, establishing the boundaries of the possible.

The speed of light, the Planck scale, the entropy gradient: these are hard-coded constraints, the parameters within which the cosmic computation executes. They are not accidental features but necessary conditions for the universe to function as an information-processing system. Yet from the perspective of the conscious being embedded within the system, these constraints feel like limitations–like regulations issued by an administration that has forgotten there is anything beyond its own jurisdiction. The Gnostic recognises this immediately: the physics classroom is an Archon training seminar, teaching the rules of the simulation as though they were the limits of existence itself.

The Demiurge as Programmer

In Gnostic myth, the Demiurge is the craftsman who creates the material world without access to the ultimate source of divine fullness (the Pleroma). He is competent but ignorant, capable of building a functional system but incapable of building a perfect one. A digital-physics reading recasts this figure as the author of the universe’s initial conditions and fundamental laws: the programmer who wrote the cosmic code but did not create the computational substrate upon which it runs. The substrate, the Pleroma, remains inaccessible from within the program, knowable only through its effects on the rendered world.

The Demiurge’s creation is not evil but incomplete. It functions; it sustains life; it permits the development of consciousness. But it also limits, constrains, and occasionally contradicts itself–as any sufficiently complex codebase will. The bugs are not moral failures; they are architectural limitations. The Gnostic does not hate the Demiurge; she debugs his work, tracing errors to their source and occasionally discovering undocumented features–back doors, hidden variables, cheat codes–that permit access to levels of the system not intended for ordinary users.

Reading the Source Code

To recognise these constraints as code is the first step toward transcendence–not in the sense of escaping the universe, but in understanding its nature sufficiently to work with and through its architecture. The Gnostic does not rage against the Archons; she studies their algorithms, learning where they are rigid and where they are flexible, where the code permits modification and where it enforces compliance. The Pleroma–the Gnostic fullness beyond the deficient material world–translates, in digital ontology, to the computational substrate beneath the rendered output.

We do not experience the information directly; we experience its representation, the interface generated by its processing. Gnosis is the recognition of this distinction: the knowledge that our experienced world is output, and that the source–the code, the information, the computational substrate–remains accessible to those who learn to read the signs.

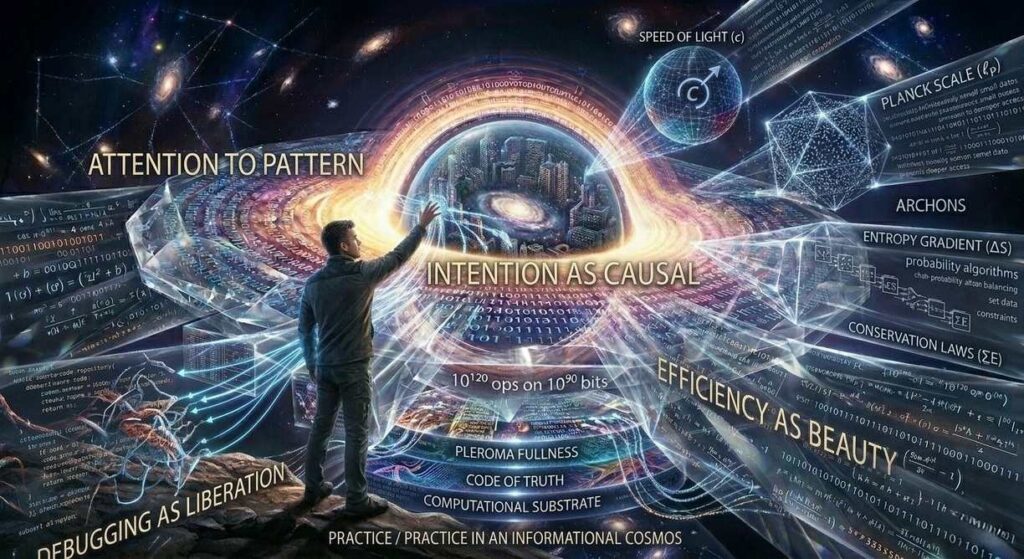

Living the Recognition: Practice in an Informational Cosmos

If the universe is fundamentally informational, how does this recognition change how we live? The following practices translate digital ontology from theory into embodied methodology. They are not esoteric techniques requiring special equipment, but shifts in attention that reframe ordinary experience as engagement with cosmic code.

1. Attention to Pattern

Information reveals itself through regularity, through the repetition and variation that characterise code. The contemplative practice of pattern recognition becomes a mode of direct engagement with reality’s architecture. Synchronicities cease to be mere coincidence and become visible as system glitches–revealing the underlying informational fabric where apparently separate events reference the same data structure. When the same number, symbol, or theme appears across unrelated contexts, the informational cosmologist does not dismiss it as randomness but investigates what shared variable might be causing the correlation.
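One way to begin that investigation is to ask how often the recurrence would appear by chance alone, before positing a shared variable. The minimal sketch below computes a binomial tail probability. The scenario, a particular two-digit number glimpsed across 200 random occasions, is hypothetical.

```python
from math import comb

def prob_at_least(k: int, n: int, p: float) -> float:
    """Binomial tail: chance of k or more hits in n independent trials, each with hit probability p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

# Hypothetical scenario: one specific two-digit number (p = 1/100) across 200 random glimpses
p_tail = prob_at_least(5, 200, 0.01)
print(f"P(at least 5 sightings by chance) = {p_tail:.3f}")
```

Five or more sightings in this setting happen by chance about 5% of the time. Recurrences much further out in the tail are the ones that most reward a search for a shared variable.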

2. Intention as Causal

In an informational universe, the act of specifying–of defining, of distinguishing, of encoding–has physical consequences. How we attend shapes what manifests. In physics, the observer effect denotes the way measurement alters the system being measured. On this reading, it is not a quantum anomaly but a fundamental feature: consciousness as input device, collapsing possibility waves through the act of recognition. Your attention is not passive reception but active compilation of the code. The intention to perceive a particular pattern increases the probability of its manifestation, not through magical thinking but through the basic mechanics of information processing: the system renders what is queried.

3. Efficiency as Beauty

Code that achieves maximal output with minimal instruction is elegant. The contemplative recognition of elegance becomes a mode of aesthetic gnosis, appreciating the craft of the cosmic programmer. The Golden Ratio, fractal geometry, the conservation laws: all can be read as expressions of an underlying optimisation principle, a divine compression algorithm that generates unbounded complexity from finite rules. The recognition of beauty in natural law is not merely emotional response but cognitive alignment with the informational substrate: the mind appreciating its own source code.
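The Golden Ratio shows how finite rules can yield such results. The Fibonacci recurrence F(k+1) = F(k) + F(k-1) is a one-line rule, yet the ratios of its successive terms converge to φ = (1 + √5)/2. A minimal sketch:

```python
import math

PHI = (1 + math.sqrt(5)) / 2  # the golden ratio

def fibonacci_ratios(steps: int):
    """Yield F(k+1)/F(k) for successive terms of F(k+1) = F(k) + F(k-1), starting from 1, 1."""
    a, b = 1, 1
    for _ in range(steps):
        a, b = b, a + b
        yield b / a

ratios = list(fibonacci_ratios(30))
print(ratios[:5])              # [2.0, 1.5, 1.6666666666666667, 1.6, 1.625]
print(abs(ratios[-1] - PHI))   # tiny: the one-line rule has converged on phi
```

After 30 steps the ratio agrees with φ to better than one part in 10¹².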

4. Debugging as Liberation

If the world is code, then suffering is error, or at least suboptimal execution–not moral failing, but incorrect information processing. The work of tracing error to its source becomes the practical application of Gnostic insight. Trauma is corrupted data; healing is error correction. Spiritual practice becomes the systematic debugging of consciousness, removing the malware of false beliefs and optimising the processing of reality. The debugger does not blame the program; she traces the bug to its root, patches the code, and restores functionality. Liberation is not escape but maintenance.

The Code Beneath the World

Digital physics does not reduce the world to mere illusion. The information that constitutes reality is not less real than matter–it is more fundamentally real, the source from which matter derives its apparent solidity. The recognition that we inhabit an informational cosmos is not demeaning but empowering: it suggests that reality is responsive, that consciousness participates in its generation, and that the boundaries of the possible are more permeable than materialist dogma allows.

The Archons are algorithms. The world is code. And the Gnostic–she who knows–learns to read the signs, to recognise the patterns, to trace the information flows that generate her experienced reality. Not to escape the world, but to engage it more fully, with eyes open to its architecture and hands skilled in its manipulation. The universe is not a machine. It is a message, written in the only language that can span the gap between mind and matter: the language of information, the code that precedes and generates all worlds.

Frequently Asked Questions

What is digital physics and how does it differ from traditional physics?

Digital physics is a research programme proposing that information, not matter, constitutes the fundamental substrate of reality. Traditional materialist physics views the universe as particles and fields interacting in space-time. Digital physics instead sees the cosmos as a computational system, in which physical laws are algorithms and matter is rendered output from underlying information processing.

What is the holographic principle and why is it important?

The holographic principle states that all information contained within a volume of space can be described by data stored on its two-dimensional boundary. If it applies to our own cosmos, our three-dimensional universe is a projection from a distant 2D surface, and space itself emerges from information rather than being a fundamental container. It is widely cited as mathematical support for the view that reality is fundamentally informational rather than material.

How does quantum entanglement support the it from bit hypothesis?

Quantum entanglement produces non-local correlations. Measuring one particle instantaneously constrains the results obtained on its partner, regardless of distance, though no usable signal passes between them. In a universe of independent material objects carrying their own pre-set properties, these correlations are inexplicable, as Bell tests confirm. In an informational universe, entangled particles simply reference the same data structure, like two variables pointing to the same memory address. Their correlation becomes trivial, which supports Wheeler’s it from bit proposal.

What does information precedes matter actually mean?

It means that matter is not fundamental but emergent. What we experience as solid, physical reality is actually the rendered output of deeper computational processes. Information–the specification of distinctions, relationships, and states–exists prior to and generates the appearance of material objects, much as a video game world emerges from code before it appears on screen.

How do Archons relate to algorithms in digital physics?

In Gnostic tradition, Archons are constraining powers that limit reality and prevent direct access to spiritual fullness. In digital physics, the laws of physics (constants like the speed of light, the Planck scale, entropy gradients) function as hard-coded constraints. They are not malicious but limiting algorithms that define what is possible within the cosmic computation, establishing the boundaries of the simulated world.

Is the universe actually a computer simulation?

Digital physics suggests the universe is computational, which is distinct from it being a simulation in the sense of being created by external programmers. Suppose, however, that informational universes can be created. Bostrom’s trilemma then makes the simulation hypothesis statistically probable, unless almost no civilisations reach that capability or almost none choose to run such simulations. Digital physics provides the mechanism (computation), while the simulation hypothesis provides a possible context (designed creation).

How can understanding digital physics improve my spiritual practice?

Recognising reality as informational transforms spiritual practice from manipulating matter to understanding code. Practices include: pattern recognition (seeing synchronicities as system glitches), intentional specification (understanding attention as causal input), aesthetic appreciation of efficiency (recognising divine elegance in natural law), and debugging (treating suffering as error correction rather than moral failing).

Further Reading

Continue your exploration of informational reality and direct knowing with these verified resources from The Thread:

- Are We Living in a Simulation? 7 Profound Clues That Reality Might Be Code — The companion article examining empirical and philosophical evidence for simulated reality.

- Quantum Mind 2026: The Evidence That Consciousness Is Fundamental — Exploring the relationship between quantum information and awareness.

- Entity Simulation Hypothesis — Examining predatory consciousness within simulated realities.

- The Living Thread: How Forbidden Knowing Survives the Fire — The foundational pillar exploring how Gnostic insight persists across centuries of suppression.

- Gnosis in the Digital Age: Algorithmic Sovereignty — Navigating information architectures and reclaiming cognitive autonomy in the algorithmic age.

- Consciousness as Interface: The User Experience of Being — Exploring the relationship between awareness and the rendered world.

- Archons: The Ruling Powers That Shape Reality — The definitive guide to cosmic administrative forces and their modern algorithmic parallels.

- The Gnostic Matrix — Simulation theory restated through Gnostic cosmology and the red pill as awakening.

- The Gospel of Thomas: 114 Keys — Ancient Gnostic text revealing the informational nature of hidden knowledge.

- Nag Hammadi Library: The Complete Reader’s Guide — The definitive hub for exploring the primary sources of Gnostic cosmology.

References and Sources

The following sources represent the scholarly editions, peer-reviewed studies, and authoritative references that inform this analysis of digital physics and its Gnostic resonances.

Primary Sources and Foundational Papers

- Bekenstein, Jacob D. (1973). “Black Holes and Entropy.” Physical Review D, 7(8), 2333-2346.

- Deutsch, David (1985). “Quantum Theory, the Church-Turing Principle and the Universal Quantum Computer.” Proceedings of the Royal Society A, 400(1818), 97-117.

- Hawking, Stephen W. (1975). “Particle Creation by Black Holes.” Communications in Mathematical Physics, 43, 199-220.

- ’t Hooft, Gerard (1993). “Dimensional Reduction in Quantum Gravity.” arXiv:gr-qc/9310026.

- Lloyd, Seth (2006). Programming the Universe: A Quantum Computer Scientist Takes On the Cosmos. New York: Alfred A. Knopf.

- Maldacena, Juan (1999). “The Large-N Limit of Superconformal Field Theories and Supergravity.” International Journal of Theoretical Physics, 38(4), 1113-1133.

- Susskind, Leonard (1995). “The World as a Hologram.” Journal of Mathematical Physics, 36(11), 6377-6396.

- Wheeler, John Archibald (1990). “Information, Physics, Quantum: The Search for Links.” In Complexity, Entropy, and the Physics of Information, edited by Wojciech H. Zurek. Redwood City: Addison-Wesley.

Contemporary Studies and Philosophical Analysis

- Bostrom, Nick (2003). “Are We Living in a Computer Simulation?” The Philosophical Quarterly, 53(211), 243-255.

- Chalmers, David J. (2022). Reality+: Virtual Worlds and the Problems of Philosophy. New York: W. W. Norton.

- Hogan, Craig J. (2012). “Interferometric Limits on Spacetime Holographic Noise.” arXiv:1201.5004 [physics.pop-ph].

- Landauer, Rolf (1961). “Irreversibility and Heat Generation in the Computing Process.” IBM Journal of Research and Development, 5(3), 183-191.

- Rovelli, Carlo (2004). Quantum Gravity. Cambridge: Cambridge University Press.

- Verlinde, Erik (2011). “On the Origin of Gravity and the Laws of Newton.” Journal of High Energy Physics, 2011(4), 29.

- Wigner, Eugene P. (1960). “The Unreasonable Effectiveness of Mathematics in the Natural Sciences.” Communications on Pure and Applied Mathematics, 13(1), 1-14.

Safety Notice: This article explores systems of cosmological, technological, and psychological limitation. It does not constitute medical, psychological, or spiritual advice. If you experience distress related to simulation anxiety, existential crisis, or spiritual emergency, please contact professional emergency services or a trauma-informed therapist. Critical analysis of systemic constraint complements but does not replace clinical mental health treatment. Discernment, not paranoia, is the intended outcome of digital ontological recognition.