Quantum Utility and the Glitch in the Matrix

For decades, quantum computing has lingered in the same purgatory as fusion power and Mars colonies: perpetually ten years away. But in 2025, the dam broke. Not with the flashy announcement of a million-qubit machine that functions merely as an expensive room heater, but with something far more subversive: quantum utility–the moment when these notoriously glitch-ridden machines finally outperform classical supercomputers at commercially relevant tasks. Welcome to the post-NISQ era, where the glitches themselves become the gateway.

Table of Contents

- The NISQ Era: Glitches as Archonic Interference

- Below-Threshold Breakthroughs: The Fault Tolerance Revolution

- Quantum Utility: Practical Advantage Beyond the Hype

- The Quantum Glitch as Physical Metaphor

- The Roadmap to 2029: Starling and the Post-Archonic Computer

- Frequently Asked Questions

- Further Reading

- References and Sources

The NISQ Era: Glitches as Archonic Interference

The NISQ era–Noisy Intermediate-Scale Quantum–sounds technical, but it describes something almost Gnostic: a liminal period where quantum computers possessed sufficient qubits to theoretically surpass classical machines, yet remained too error-prone to achieve reliable advantage. Like the hylic realm of Gnostic cosmology, NISQ machines existed in a state of dense materiality, their quantum states decohering into classical noise almost as quickly as they could be initialised. They were, in essence, archonic computers: systems that promised transcendence while remaining trapped in physical limitation.

The statistics are humbling. As of 2025, quantum computers make an error roughly once every 100 to 1,000 operations, one hundred billion billion times more often than classical computers. Alice & Bob CEO Théau Peronnin describes pre-error-correction quantum machines bluntly: “basically noise generating machines.” The quantum glitch–decoherence, bit-flip errors, phase errors–represented not merely technical failure but the physical universe’s stubborn resistance to binary categorisation.
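To see why error rates of 1 in 100 to 1 in 1,000 are so limiting, assume each operation fails independently with probability p (a simplification; real devices also suffer correlated errors and leakage). A circuit of N operations then succeeds with probability (1 − p)^N, and inverting that relation gives the per-operation error rate a given workload demands. The function names in this minimal sketch are illustrative, not taken from any library.

```python
import math


def circuit_success_probability(p: float, n_ops: int) -> float:
    """Probability that n_ops operations all succeed, assuming independent errors at rate p."""
    # exp(n * log1p(-p)) is numerically safer than (1 - p) ** n when p is tiny
    return math.exp(n_ops * math.log1p(-p))


def max_error_rate(n_ops: int, target_success: float) -> float:
    """Largest per-operation error rate that still reaches target_success over n_ops operations."""
    # Solve (1 - p) ** n_ops == target_success for p without losing precision near zero
    return -math.expm1(math.log(target_success) / n_ops)


if __name__ == "__main__":
    for p in (1e-2, 1e-3):
        print(f"p={p:g}: a 1,000-operation circuit succeeds with probability "
              f"{circuit_success_probability(p, 1_000):.3g}")
    # Scale of IBM's Starling target: 100 million operations, with a 50% success budget
    print(f"error rate needed for 1e8 operations: {max_error_rate(10**8, 0.5):.2g}")
```

Under this model a 1,000-operation circuit almost never finishes cleanly at p = 10⁻² and succeeds only about 37% of the time at p = 10⁻³, while running 100 million operations calls for logical error rates below roughly 7 × 10⁻⁹. That gap is what error correction has to close.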

Yet these glitches were never mere noise. Quantum error correction research exploded in 2025 with 120 peer-reviewed papers published in the first ten months alone, up from 36 in 2024. The field shifted from theoretical speculation to engineering reality, recognising that error is not an obstacle to quantum computing but its fundamental substrate–the very feature that, when properly harnessed through quantum error correction codes, enables fault-tolerant operation.

Below-Threshold Breakthroughs: The Fault Tolerance Revolution

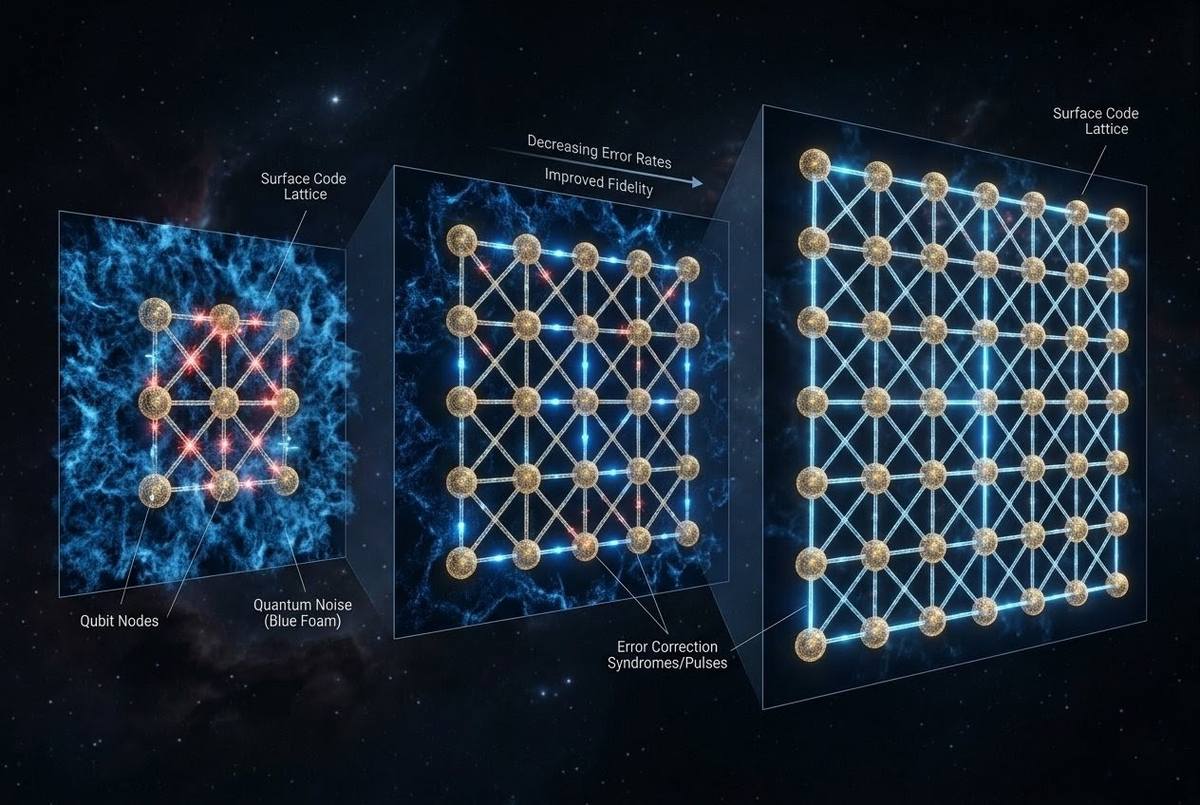

The watershed moment arrived in December 2024 with Google’s Willow chip, but its full implications resonated throughout 2025. Willow demonstrated “below-threshold” error correction: the counter-intuitive phenomenon where increasing the number of physical qubits actually decreases logical error rates. By scaling the surface code distance from 3 to 5 to 7 (3×3, 5×5, and 7×7 grids of data qubits), Google achieved exponential error reduction, strong evidence that fault-tolerant quantum computing is not merely possible but scalable.
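The exponential reduction can be written as ε_d ≈ ε_3 / Λ^((d − 3)/2), where ε_d is the logical error per correction cycle at code distance d and Λ is the suppression factor gained each time d grows by two. This minimal sketch extrapolates that relation; the starting rate ε_3 = 10⁻² and Λ = 2 are illustrative assumptions (Λ = 2 is close to the roughly twofold suppression per step reported for Willow), and real hardware need not stay on the trend at larger distances.

```python
def projected_logical_error(eps_3: float, lam: float, d: int) -> float:
    """Extrapolate the logical error per cycle at odd distance d from its value at d = 3."""
    if d < 3 or d % 2 == 0:
        raise ValueError("surface code distance must be an odd integer >= 3")
    # Each step d -> d + 2 divides the logical error rate by lam
    return eps_3 / lam ** ((d - 3) // 2)


def distance_for_target(eps_3: float, lam: float, target: float) -> int:
    """Smallest odd distance whose projected logical error is at or below target."""
    if lam <= 1:
        raise ValueError("lam <= 1 means the code is not below threshold")
    d = 3
    while projected_logical_error(eps_3, lam, d) > target:
        d += 2
    return d


if __name__ == "__main__":
    for d in (3, 5, 7):
        print(d, projected_logical_error(1e-2, 2.0, d))
    print("distance needed for 1e-6:", distance_for_target(1e-2, 2.0, 1e-6))
```

With these assumed inputs the projection halves at each step from d = 3 to 5 to 7 and first reaches 10⁻⁶ at d = 31. A Λ at or below 1 is rejected up front because the loop would otherwise never terminate: above threshold, growing the code does not help.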

This is the quantum equivalent of passing through the archonic veil. Below-threshold operation means the error correction circuitry suppresses errors faster than they accumulate–the system becomes self-healing, its logical qubits growing more reliable as the physical substrate expands. The glitch becomes the cure; the noise becomes the signal. In December 2025, QuEra Computing declared 2025 “the year fault tolerance moved from theoretical promise to engineering reality,” having demonstrated continuous operation, scalable error correction, and magic state distillation using 448 atomic qubits in collaboration with Harvard and MIT.
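The claim that a code grows more reliable as it grows, but only below a threshold, can be seen in the simplest code of all: a classical repetition code decoded by majority vote. Assuming each of d copies flips independently with probability p and that the vote itself is perfect (luxuries real quantum codes do not enjoy), the logical error rate is the chance that more than half the copies flip. This toy model’s threshold is p = 0.5; practical surface-code thresholds sit closer to 1%.

```python
from math import comb


def repetition_logical_error(p: float, d: int) -> float:
    """Probability that majority voting over d independent copies returns the wrong bit."""
    if d % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    # Decoding fails whenever k > d/2 of the copies have flipped
    return sum(comb(d, k) * p**k * (1 - p) ** (d - k) for k in range(d // 2 + 1, d + 1))


if __name__ == "__main__":
    for p in (0.1, 0.6):  # one physical error rate below threshold, one above
        rates = [repetition_logical_error(p, d) for d in (1, 3, 5, 7)]
        print(p, [f"{r:.4f}" for r in rates])
```

At p = 0.1 the failure rate drops from 0.1 to 0.028 at d = 3 and keeps falling as copies are added; at p = 0.6 every extra copy makes the result worse. That contrast is the classical shadow of the below-threshold behaviour described above.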

The Hour-Long Qubit and the End of Decoherence

While Google pursued error correction through redundancy, Paris-based Alice & Bob attacked the problem at its source: qubit longevity. In September 2025, they announced qubits that resist bit-flip errors for more than one hour–four times longer than their previous record and millions of times longer than typical qubits, which often decohere within microseconds. IBM’s Eagle processor achieves 400 microseconds of coherence; other systems manage 1 to 34 milliseconds. Alice & Bob’s cat qubits go more than 3,600 seconds without a bit flip, although that figure is a bit-flip time rather than a full coherence time: phase-flip errors still strike on far shorter timescales.

This is not incremental improvement; it is a phase transition. By virtually eliminating bit-flip errors (one of the two primary error types in quantum systems), Alice & Bob showed how hardware improvements could cut error correction overhead by orders of magnitude: the remaining phase flips can be handled by a one-dimensional repetition code rather than a full two-dimensional surface code. The company projects fault-tolerant quantum computers with 100 logical qubits by 2030, bypassing the million-physical-qubit requirement that burdens competing architectures. The archonic grip of decoherence loosens; the quantum state maintains its integrity less through bureaucratic error-correction committees than through inherent physical stability.
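A rough sense of the saving comes from counting physical qubits per logical qubit. A distance-d rotated surface code uses d² data qubits plus d² − 1 measurement qubits, while a distance-d repetition code, which only has to catch phase flips once bit flips are suppressed, uses d data qubits plus d − 1 measurement qubits. This comparison is a simplification that assumes equal distances for both codes and ignores the extra hardware each cat qubit needs; it illustrates the scaling, not Alice & Bob’s actual resource estimates.

```python
def surface_code_qubits(d: int) -> int:
    """Physical qubits in one rotated surface code patch: d*d data + (d*d - 1) measurement."""
    return 2 * d * d - 1


def repetition_code_qubits(d: int) -> int:
    """Physical qubits in a distance-d repetition code: d data + (d - 1) measurement."""
    return 2 * d - 1


if __name__ == "__main__":
    print(f"{'d':>3} {'surface':>8} {'repetition':>11} {'ratio':>6}")
    for d in (3, 7, 15, 25):
        s, r = surface_code_qubits(d), repetition_code_qubits(d)
        print(f"{d:>3} {s:>8} {r:>11} {s / r:>6.1f}")
```

The ratio grows roughly linearly with d because one code is two-dimensional and the other one-dimensional, which is where the claimed order-of-magnitude savings come from.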

Quantum Utility: Practical Advantage Beyond the Hype

Error correction milestones matter only if they enable practical utility. In September 2025, HSBC demonstrated quantum utility in the wild: algorithmic bond trading using hybrid classical-quantum computing across multiple IBM quantum computers. Processing real production-scale European corporate bond data, HSBC achieved up to 34% improvement in predicting trade fill likelihood at quoted prices compared to standard classical techniques. This is not a contrived benchmark; this is financial infrastructure recognising quantum advantage in real economic decisions.

Similarly, IonQ and Ansys conducted medical device simulations on IonQ’s Forte system that outperformed classical high-performance computing by 12%–one of the first documented cases of practical quantum advantage in medical-device engineering. The hybrid workflow for blood pump design handled up to 2.6 million vertices and 40 million edges, demonstrating that quantum-accelerated simulation can deliver real-world impact today. SpinQ applied quantum computing to genome assembly challenges with BGI Genomics, recasting the reconstruction of genomes from sequencing reads as combinatorial optimisation tasks of the kind quantum optimisation algorithms are designed to tackle. The quantum utility era has arrived not with a bang but with a 12-34% improvement in specific, high-value computational domains.
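Genome assembly becomes an optimisation problem once short sequencing reads are treated as nodes and their overlaps as edge weights: the task is to find the read order that maximises total overlap, a close relative of the travelling salesman problem. This sketch solves a three-read toy instance by brute force to show the objective a quantum or quantum-inspired optimiser would target; the reads are invented, and the source does not describe SpinQ’s actual formulation. For n reads, the standard QUBO encoding of such ordering problems uses n² binary variables, one per read-position pair, which is the form annealers and QAOA-style algorithms accept.

```python
from itertools import permutations


def overlap(a: str, b: str) -> int:
    """Length of the longest proper suffix of a that equals a prefix of b."""
    for k in range(min(len(a), len(b)) - 1, 0, -1):
        if a[-k:] == b[:k]:
            return k
    return 0


def assemble(reads: list[str]) -> str:
    """Brute-force the read order with maximum total overlap, then merge the reads."""
    best_order = max(
        permutations(reads),
        key=lambda order: sum(overlap(x, y) for x, y in zip(order, order[1:])),
    )
    contig = best_order[0]
    for prev, nxt in zip(best_order, best_order[1:]):
        contig += nxt[overlap(prev, nxt):]  # append only the non-overlapping tail
    return contig


if __name__ == "__main__":
    print(assemble(["CTAAT", "ATGGC", "GGCTA"]))
```

Brute force checks n! orderings, which is hopeless beyond a handful of reads; that combinatorial explosion is exactly why assembly is pitched as a target for optimisation hardware.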

Algorithmic Fault Tolerance: The 100x Efficiency Revolution

Beyond hardware advances, 2025 witnessed breakthrough algorithmic efficiencies. QuEra’s collaboration with Harvard and Yale introduced Algorithmic Fault Tolerance (AFT), a framework reducing quantum error correction overhead by 10 to 100 times. Conventional fault-tolerant schemes run roughly d rounds of syndrome extraction for every logical gate, where d is the code distance; AFT instead applies transversal operations with only a constant number of syndrome rounds per gate and relies on correlated decoding across entire algorithmic windows, cutting runtime overhead by a factor roughly equal to the code distance.
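The factor-of-d saving follows from simple counting. If each logical layer of an algorithm needs about d rounds of syndrome extraction in a conventional scheme, but only a constant number (taken here as one) under AFT-style transversal gates with correlated decoding, total runtime scales with d in the first case and not in the second. The layer count, distance, and one-millisecond round time below are hypothetical placeholders, not figures from the QuEra work.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    logical_layers: int   # sequential logical gate layers in the algorithm (hypothetical)
    distance: int         # code distance d
    round_time_s: float   # wall-clock time of one syndrome-extraction round (hypothetical)


def runtime_seconds(w: Workload, rounds_per_layer: int) -> float:
    """Total time spent on syndrome extraction across the whole algorithm."""
    return w.logical_layers * rounds_per_layer * w.round_time_s


if __name__ == "__main__":
    w = Workload(logical_layers=1_000_000, distance=25, round_time_s=1e-3)
    conventional = runtime_seconds(w, rounds_per_layer=w.distance)  # ~d rounds per layer
    aft_style = runtime_seconds(w, rounds_per_layer=1)              # constant rounds per layer
    print(f"conventional: {conventional / 3600:.1f} h, AFT-style: {aft_style / 3600:.2f} h, "
          f"speed-up: {conventional / aft_style:.0f}x")
```

Because decoding cost and gate times are ignored, the speed-up here is exactly d; real gains depend on how much correlated decoding itself costs.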

The implications are staggering. Earlier estimates suggested breaking RSA-2048 encryption would require 20 million noisy physical qubits; recent research shows that combining smarter software, efficient error correction, and higher-quality qubits reduces this requirement to under one million, a twenty-fold reduction that could pull practical timelines forward by years. Iceberg Quantum’s Pinnacle architecture, announced February 2026, claims a further tenfold reduction in the physical qubits required for RSA-2048, potentially enabling cryptographically relevant quantum computation this decade rather than next. The Pinnacle paper, however, remains a preprint that has not yet undergone full peer review, and the engineering trade-offs involved remain subjects of active debate within the research community.
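Why does qubit count matter for RSA at all? Shor’s algorithm reduces factoring a modulus N to finding the order r of a base a modulo N, the smallest r with a^r ≡ 1 (mod N); a quantum computer finds r efficiently, and the factors then fall out of a gcd computation. This sketch keeps that classical scaffolding but replaces the quantum step with brute-force order finding, which takes exponential time and only works on toy numbers such as 15 and 21.

```python
from math import gcd
from typing import Optional, Tuple


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1 (brute force; the step Shor's algorithm speeds up)."""
    r, x = 1, a % n
    while x != 1:
        x = (x * a) % n
        r += 1
    return r


def factor_via_order(n: int, a: int) -> Optional[Tuple[int, int]]:
    """Try to split n using base a; returns None when this base is unlucky."""
    g = gcd(a, n)
    if g != 1:
        return g, n // g  # a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: pick another base
    y = pow(a, r // 2, n)
    if y == n - 1:
        return None  # a^(r/2) ≡ -1 (mod n) yields only trivial factors
    p = gcd(y - 1, n)
    return p, n // p


if __name__ == "__main__":
    print(factor_via_order(15, 7))  # order of 7 mod 15 is 4
    print(factor_via_order(21, 2))  # order of 2 mod 21 is 6
```

For a 2048-bit modulus the brute-force loop is useless, and running the quantum order-finding step at that size is what the million-qubit and hundred-thousand-qubit estimates are budgeting for.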

The Quantum Glitch as Physical Metaphor

There is a metaphysical dimension to quantum error that transcends engineering. Classical computers operate deterministically: a bit is 0 or 1, and errors represent unambiguous hardware failure. Quantum glitches–decoherence, superposition collapse, entanglement disruption–are ontologically different. They represent the universe’s refusal to be pinned down, the insistence that reality at its most fundamental level remains probabilistic, relational, and context-dependent.

The quantum glitch is the physical manifestation of the Gnostic “fall”–the decoherence that occurs when quantum possibilities collapse into classical actualities. Error correction, then, becomes a technology of redemption: the reconstruction of quantum coherence through sophisticated encoding schemes that distribute information across entangled networks, rendering the whole more stable than its parts. The surface code, the cat qubit, the topological qubit–these are not merely engineering solutions but ontological strategies for maintaining quantum superposition against the entropic pull of classical reality.

When Reality Decoheres: Error as Ontological Feature

In this light, quantum error correction research becomes the most ambitious project in metaphysical engineering: the construction of computational systems that operate in the liminal space between possibility and actuality, maintaining coherent superposition long enough to perform calculations impossible within classical logic. The 2025 breakthroughs represent not merely technical achievements but boundary crossings–the creation of machines that operate in the quantum realm long enough to glimpse solutions invisible to classical computation.

The quantum glitch reminds us that reality itself is noisy, intermediate-scale, and perpetually threatening to decohere. That we have learned to harness this noise–to ride the wave of decoherence rather than be drowned by it–represents a triumph of engineering imagination over physical limitation. The glitch is not the enemy; the glitch is the teacher.

The Roadmap to 2029: Starling and the Post-Archonic Computer

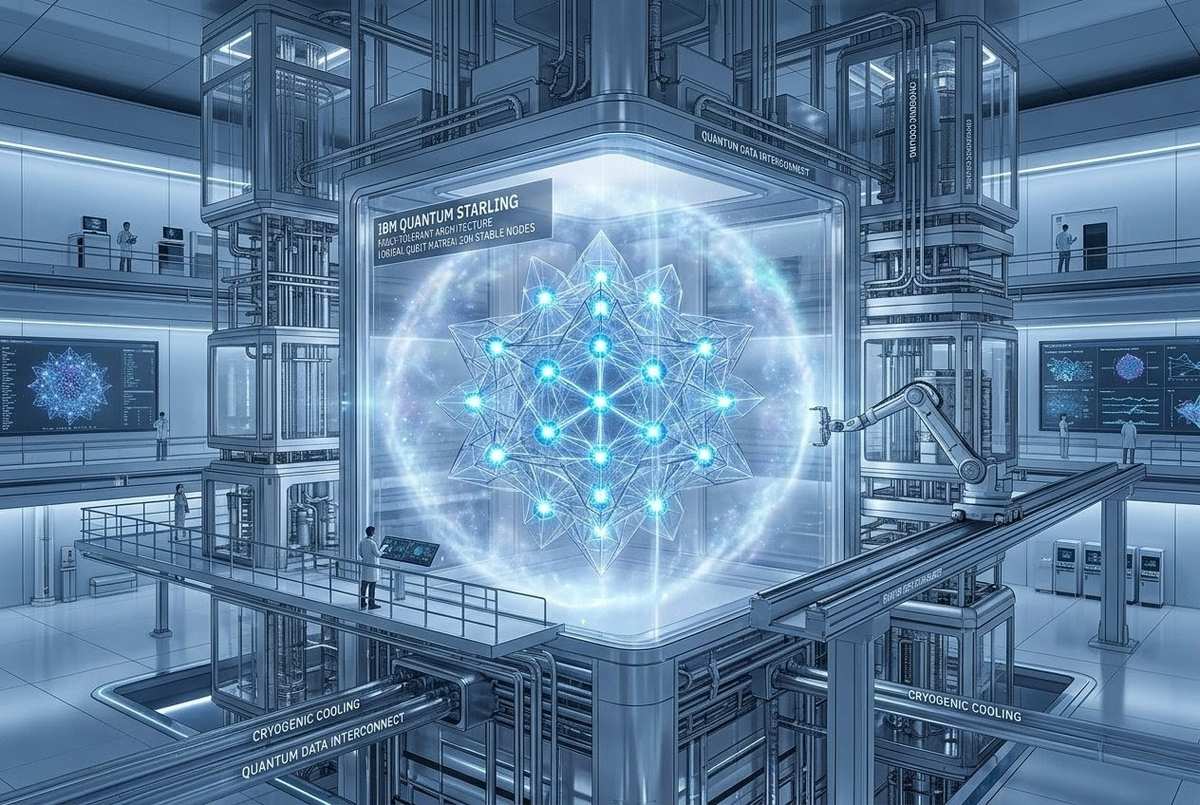

IBM’s updated roadmap, released in 2025, projects the Quantum Starling system for 2029: 200 logical qubits capable of executing 100 million error-corrected quantum operations. Beyond that, IBM’s Blue Jay system targets 2,000 logical qubits from 2033 onward, part of a broader ambition to build 100,000-qubit quantum-centric supercomputers by 2033. Microsoft announced its Majorana 1 processor in February 2025, an eight-qubit topological device that the company claims offers a path to one million qubits on a single chip. However, the Majorana 1 announcement has generated significant scientific scepticism: an editorial note accompanying the related Nature paper stated that the results do not represent evidence for the presence of Majorana zero modes in the reported devices, and that the work was published for introducing a device architecture that might enable such experiments in the future. The distinction between marketing optimism and peer-reviewed consensus remains sharp.

These are not vanity metrics. They represent the transition from NISQ to FASQ–Fault-tolerant Application-Scale Quantum–the era where quantum computers cease to be experimental curiosities and become utility-scale infrastructure. The archonic trap of decoherence, which has limited quantum systems to laboratory demonstrations and contrived benchmarks, is finally releasing its grip.

The quantum utility era promises not merely faster computation but different computation: the solution of problems structurally intractable to classical approaches. From molecular simulation for drug discovery to energy grid optimisation, from materials science to cryptanalysis, the quantum computer represents a breach in the classical computational envelope. The glitches that plagued early quantum systems were the birth pangs of a new computational ontology–one that mirrors the uncertainty, superposition, and entanglement of physical reality itself.

We stand at the threshold. The milestones of 2025 (the 34% improvement in bond trading, the 12% advantage in medical simulation) and the below-threshold error correction unveiled at the close of 2024 are the first photons of dawn. The fault-tolerant quantum computer is no longer a theoretical possibility but an engineering project with defined milestones, committed capital (over $230 million to QuEra alone in 2025), and demonstrated sub-components. The glitch has been harnessed. The matrix is opening.

Frequently Asked Questions

What is quantum utility?

Quantum utility is the demonstrated ability of quantum computers to outperform classical supercomputers at commercially relevant, practical tasks rather than contrived benchmarks. HSBC’s 2025 bond trading demonstration, run on IBM’s Heron processor, showed up to a 34% improvement in predicting the likelihood of trades being filled at quoted prices, marking the transition from experimental to utility-scale quantum computing.

What is below-threshold error correction?

Below-threshold error correction occurs when adding more physical qubits to a quantum error correction code actually decreases logical error rates rather than increasing them. Google’s Willow chip demonstrated this in December 2024, providing strong evidence that fault-tolerant quantum computing is scalable. Each time the code distance increased (from 3 to 5, then from 5 to 7), the logical error rate fell by a factor of roughly two; Google reported a suppression factor of about 2.14.

What is the NISQ era?

NISQ (Noisy Intermediate-Scale Quantum) refers to the era of quantum computers with 50-1,000 qubits that are too error-prone for fault-tolerant operation but capable of demonstrating quantum phenomena. The NISQ era is ending as 2025 breakthroughs achieve below-threshold error correction and practical quantum utility across finance, medicine, and genomics.

How long do qubits normally last?

Typical qubits decohere (lose quantum properties) within microseconds to milliseconds. IBM’s Eagle processor achieves 400 microseconds; many systems last 1-34 milliseconds. Alice & Bob’s breakthrough achieved 1+ hour resistance to bit-flip errors–millions of times longer than typical qubits–using their Galvanic Cat superconducting design, although phase-flip errors still occur on much shorter timescales.

What is the difference between a physical qubit and a logical qubit?

Physical qubits are the actual quantum hardware (atoms, superconducting circuits, etc.) that are noisy and error-prone. Logical qubits are error-corrected qubits created by encoding quantum information across multiple physical qubits using quantum error correction codes. IBM’s Starling targets 200 logical qubits by 2029; Blue Jay aims for 2,000 logical qubits beyond 2033.
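The physical-versus-logical distinction can be made concrete with the three-qubit bit-flip code, the smallest quantum code. One logical qubit α|0⟩ + β|1⟩ is spread across three physical qubits as α|000⟩ + β|111⟩; measuring the two parities Z₀Z₁ and Z₁Z₂ reveals which qubit flipped without revealing α or β, because both branches of the superposition share the same parities. This minimal sketch tracks amplitudes in a plain dictionary and assumes at most one bit flip and perfect parity measurements; it offers no protection against phase flips, which is one reason practical codes such as the surface code are much larger.

```python
State = dict  # maps a bitstring such as "000" to its amplitude


def encode(alpha: complex, beta: complex) -> State:
    """Encode one logical qubit alpha|0> + beta|1> into three physical qubits."""
    return {"000": alpha, "111": beta}


def apply_x(state: State, qubit: int) -> State:
    """Flip one physical qubit in every branch (a bit-flip error, or its correction)."""
    flipped = {}
    for bits, amp in state.items():
        new_bit = "1" if bits[qubit] == "0" else "0"
        flipped[bits[:qubit] + new_bit + bits[qubit + 1:]] = amp
    return flipped


def syndrome(state: State) -> tuple:
    """Parities Z0Z1 and Z1Z2; after at most one X error every branch gives the same values."""
    bits = next(iter(state))
    return (int(bits[0]) ^ int(bits[1]), int(bits[1]) ^ int(bits[2]))


# Syndrome -> which physical qubit to flip back (None means no error detected)
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(state: State) -> State:
    target = CORRECTION[syndrome(state)]
    return state if target is None else apply_x(state, target)


if __name__ == "__main__":
    logical = encode(0.6, 0.8)
    for q in range(3):
        recovered = correct(apply_x(logical, q))
        print(q, recovered == logical)
```

Every single-qubit flip is undone while α and β are never read, which is the sense in which the logical qubit is more robust than any of its physical parts.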

Can quantum computers break encryption now?

Not yet. Current estimates suggest breaking RSA-2048 requires fewer than 1 million noisy physical qubits, reduced from earlier estimates of 20 million due to 2025 algorithmic improvements. Iceberg Quantum’s Pinnacle architecture (February 2026 preprint) claims fewer than 100,000 physical qubits could suffice, though this remains unverified by peer review. IBM’s Starling (2029) targets 200 logical qubits; cryptographically relevant quantum computers may arrive this decade but are not yet available.

What is Algorithmic Fault Tolerance (AFT)?

AFT is a 2025 breakthrough by QuEra, Harvard, and Yale published in Nature that reduces quantum error correction overhead by 10-100x through transversal operations and correlated decoding across entire algorithmic windows. By cutting runtime overhead by a factor roughly equal to the code distance (often 30 or higher), AFT brings practical fault-tolerant quantum computing years closer to reality.

Further Reading

These links connect quantum utility and error correction to related resources within the ZenithEye library, offering context on consciousness, simulation, algorithmic sovereignty, and the metaphysics of information.

- Quantum Mind 2026: The Evidence That Consciousness Is Fundamental — Exploring the quantum foundations of consciousness and the observer effect in quantum systems.

- AI and the Archon: Algorithmic Governance and Human Autonomy — How classical computing architectures embody archonic control structures that quantum systems may transcend.

- The Digital Demiurge: AI as the New Yaldabaoth and the Quantum Escape — The companion piece examining quantum computing as potential liberation from algorithmic determinism.

- Architecture of Reality: Information Precedes Matter — The information-theoretic foundations of quantum mechanics and the primacy of code over substance.

- Simulation Hypothesis: Clues That Reality Is Code — Quantum indeterminacy as evidence for the computational nature of physical reality.

- The Entity and Simulation Hypothesis — The intersection of quantum observation, consciousness, and the nature of entities within computed realities.

- Gnosis in the Digital Age: Algorithmic Sovereignty — Maintaining autonomy within computational systems and the quantum potential for cryptographic liberation.

- The Gnostic Matrix: Reality as Information System — The metaphysical implications of quantum information theory and error correction as redemptive technology.

- Holographic Universe Theory and Consciousness — Quantum error correction codes applied to spacetime and the holographic principle.

References and Sources

The following sources support the claims and data presented in this article. Technical and industry sources are grouped separately from the metaphysical and philosophical frameworks.

Quantum Computing Industry and Technical Sources

- Google Quantum AI. (2024, December). Quantum error correction below the surface code threshold. Nature. DOI: 10.1038/s41586-024-08449-y.

- Alice & Bob. (2025, September 25). Alice & Bob Shares Preliminary Results Vastly Surpassing Previous Bit-Flip Time Record. alice-bob.com.

- HSBC & IBM. (2025, September 25). HSBC Demonstrates Quantum-Enabled Algorithmic Trading With IBM. thequantuminsider.com.

- IonQ & Ansys. (2025, March 20). IonQ and Ansys Achieve Major Quantum Computing Milestone. ionq.com.

- SpinQ Technology. (2025). SpinQ’s Role in Democratizing Quantum Technologies. spinquanta.com.

- QuEra Computing, Harvard, & Yale. (2025, September 24). Low-Overhead Transversal Fault Tolerance for Universal Quantum Computation. Nature. DOI: 10.1038/s41586-025-09543-5.

- QuEra Computing. (2025, December 9). QuEra Computing Marks Record 2025 as the Year of Fault Tolerance and Over $230M of New Capital. quera.com.

- IBM. (2025, June 10). IBM Offers Roadmap Toward Large-Scale, Fault-Tolerant Quantum Computer. ibm.com/quantum.

- IBM. (2026, April 16). IBM Lays Out Clear Path to Fault-Tolerant Quantum Computing. ibm.com/quantum/blog/large-scale-ftqc.

- Iceberg Quantum. (2026, February 13). Pinnacle and Series Seed. iceberg-quantum.com. (Preprint; not yet peer-reviewed)

- Microsoft. (2025, February 19). Microsoft Introduces Majorana 1, the World’s First Quantum Chip Powered by Topological Qubits. news.microsoft.com.

- Riverlane. (2025, December 22). Quantum Error Correction: Our 2025 Trends and 2026 Predictions. riverlane.com.

Journalism and Analysis

- Forbes. (2025, September 25). Massive Quantum Computing Breakthrough: Long-Lived Qubits. forbes.com.

- Fortune. (2025, September 25). HSBC Reports Quantum Computing Breakthrough in Bond Trading. fortune.com.

- IEEE Spectrum. (2025, June 10). IBM Tackles New Approach to Quantum Error Correction. spectrum.ieee.org.

- APS Physics. (2025, March 21). Microsoft’s Claim of a Topological Qubit Faces Tough Questions. physics.aps.org.

- PostQuantum. (2026, March 1). Pinnacle Architecture: 100,000 Qubits to Break RSA-2048, but at What Cost? postquantum.com.

Safety Notice: This article explores the technological, philosophical, and metaphysical dimensions of quantum computing and error correction. It does not constitute technical, investment, or strategic advice. If you are making decisions about cryptographic migration, quantum readiness, or enterprise technology strategy, please consult qualified cybersecurity professionals, quantum computing specialists, and relevant standards bodies (NIST, NSA CNSA 2.0). The timelines and capabilities discussed here represent current research trajectories, not guaranteed outcomes. Quantum computing complements but does not replace classical infrastructure planning.