Digital Suppression: The Contemporary Form of the Old Pattern

The Algorithmic Inquisition: How Digital Suppression Replaced the Index of Forbidden Books

The fire has learned to hide itself. The burning of books continues, but the flames are invisible, automated, distributed across server farms and neural networks that hum with the quiet efficiency of a cosmic bureaucracy. The index has become the algorithm. The inquisitor has become the content moderator working from a remote cubicle, following policy documents written by committee. The suppression of forbidden knowing–ancient in method–has evolved for the digital age with terrifying sophistication. The pattern persists; only the mechanism changes, and that change renders the suppression nearly undetectable to those whose words are burned.

The historical pattern is clear, almost bureaucratically so. First, the identification of dangerous knowledge–material that threatens the arrangements of power, that speaks of liberation beyond the permitted channels. Second, the prohibition of that knowledge through institutional authority–church, state, or corporation acting as the archon’s representative. Third, the enforcement of prohibition through visible punishment–burning, exile, imprisonment, or social death. The visibility serves as warning. The warning maintains order through fear.

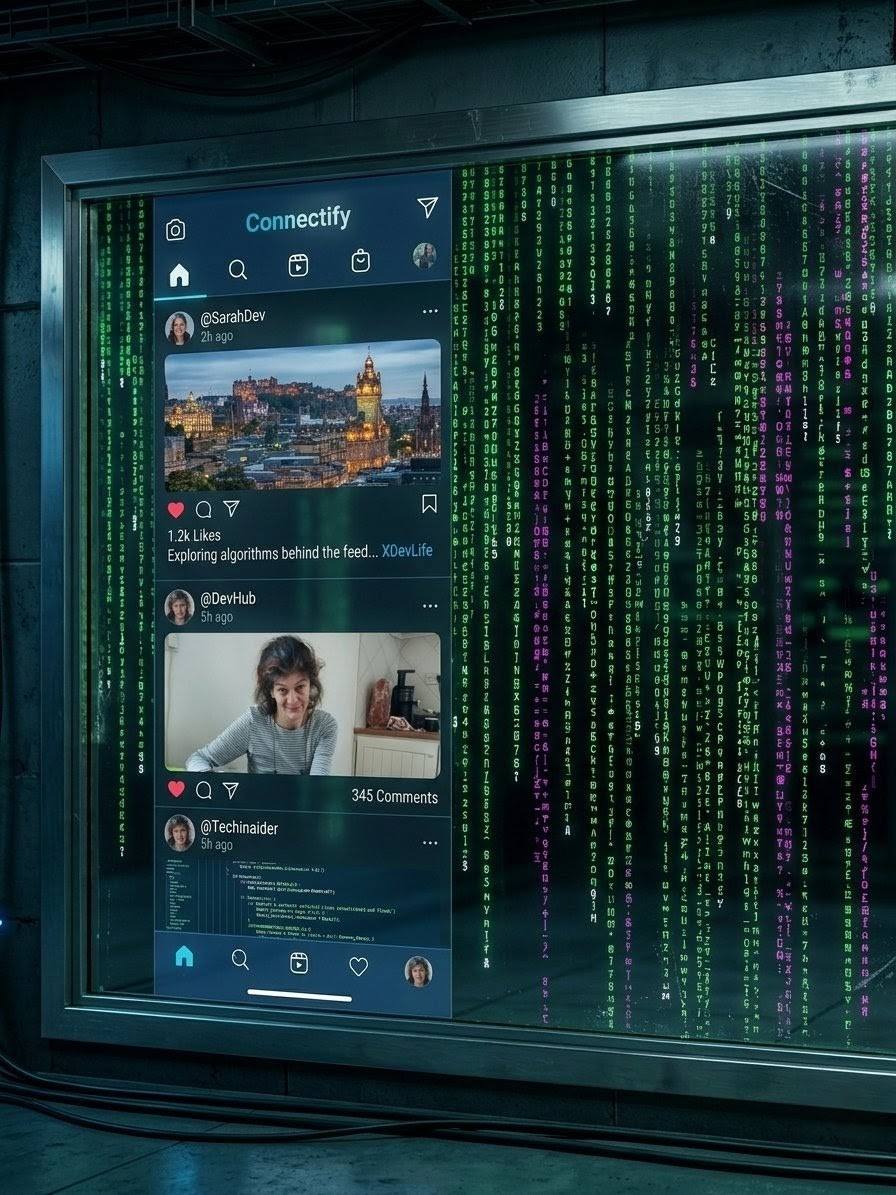

The digital pattern inverts the visibility entirely. The suppression is not announced with trumpets or smoke. It is algorithmic–the search result that does not appear, the video that cannot be monetised, the account that is shadowbanned into digital ghosthood, the recommendation that never comes. The user, unaware of what is missing, assumes the available is the complete. The fire burns without smoke, leaving no ash for evidence, only the subtle sense that something might be missing–an intuition quickly dismissed by the algorithm’s next dopamine delivery.

Table of Contents

- The Invisible Combustion

- The Algorithmic Inquisition

- The Filter Bubble as Panopticon

- The Neurochemistry of Digital Captivity

- Countermeasures and Resistance

- The Regulatory Counter-Current

- Pattern Recognition in the Digital Age

- The Thread Extended Beyond Digital Walls

- Frequently Asked Questions

- Further Reading

- References and Sources

The Invisible Combustion: How Suppression Became Distributed

No single hand holds the match in this new order. The suppression is outsourced to machine learning models trained on data labelled by contractors in distant time zones, instructed by policies written by committees with conflicting interests, enforced by systems too complex for any individual to comprehend–including those who built them. The responsibility, distributed across neural networks and corporate hierarchies, effectively disappears. The outcome–certain voices amplified to deafening volume, others silenced into static–is achieved without decision, without accountability, without the inconvenience of a signature on the execution order.

Distributed Denial of Responsibility

The platform defends itself with the precision of a well-trained bureaucrat: “We do not censor; we rank according to engagement metrics. We do not ban; we demonetise to protect advertiser relationships. We do not silence; we reduce distribution to maintain platform health.” The distinctions, legal and technical, obscure the fact that the outcomes are functionally identical. The speaker, unable to reach an audience, is silenced regardless of the vocabulary used to describe the deprivation. It is the archontic method perfected–control without the visible exercise of power, domination that appears as a natural consequence rather than a deliberate policy.

The Bureaucracy of Silence

The user collaborates unconsciously, a willing participant in their own containment. The algorithm, personalised down to individual clicks, pauses, and scrolls, delivers what the user is predicted to want based on past behaviour. The prediction, based on historical clicks and dwell time, reinforces past behaviour in a tightening spiral. The user, enclosed in filter bubbles that become ever more impermeable, encounters no friction, no challenge, no dangerous knowledge that might disrupt the comfortable patterns. The suppression is experienced as satisfaction, the narrowing of perspective as the cosy warmth of personalised content. The prison feels like a tailored suit.
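The tightening spiral can be made concrete with a toy model. What follows is a minimal sketch, not a description of any real platform's ranking system: the three topic names, the affinity values, and the update rule are all illustrative assumptions. The feed starts evenly divided, and each round it is re-weighted by the clicks it expects to receive; Shannon entropy serves as a rough measure of how wide the feed remains.

```python
import math

# Hypothetical click-through affinities: how likely this user is to engage
# with each kind of content when it is shown. The values are illustrative.
AFFINITY = {"comforting": 0.9, "familiar": 0.5, "challenging": 0.1}

def normalise(weights):
    """Turn raw ranking weights into shares of the feed that sum to 1."""
    total = sum(weights.values())
    return {topic: weight / total for topic, weight in weights.items()}

def entropy_bits(shares):
    """Shannon entropy of the feed: lower means a narrower feed."""
    return -sum(p * math.log2(p) for p in shares.values() if p > 0)

def simulate_feed(affinity, rounds=200, learning_rate=0.5):
    """Re-rank the feed from expected clicks and record its entropy each round."""
    weights = {topic: 1.0 for topic in affinity}  # start with an even feed
    entropy_history = []
    for _ in range(rounds):
        shares = normalise(weights)
        entropy_history.append(entropy_bits(shares))
        for topic, share in shares.items():
            # What is shown more is clicked more, and what is clicked more
            # is shown more in the next round.
            expected_clicks = share * affinity[topic]
            weights[topic] += learning_rate * expected_clicks
    return normalise(weights), entropy_history

final_shares, entropy_history = simulate_feed(AFFINITY)
print(final_shares)
print(entropy_history[0], entropy_history[-1])
```

Under these assumptions the entropy never rises from one round to the next. Nothing in the loop removes the challenging topic; its share simply shrinks while the comforting share grows.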

The Algorithmic Inquisition: Targets and Methods

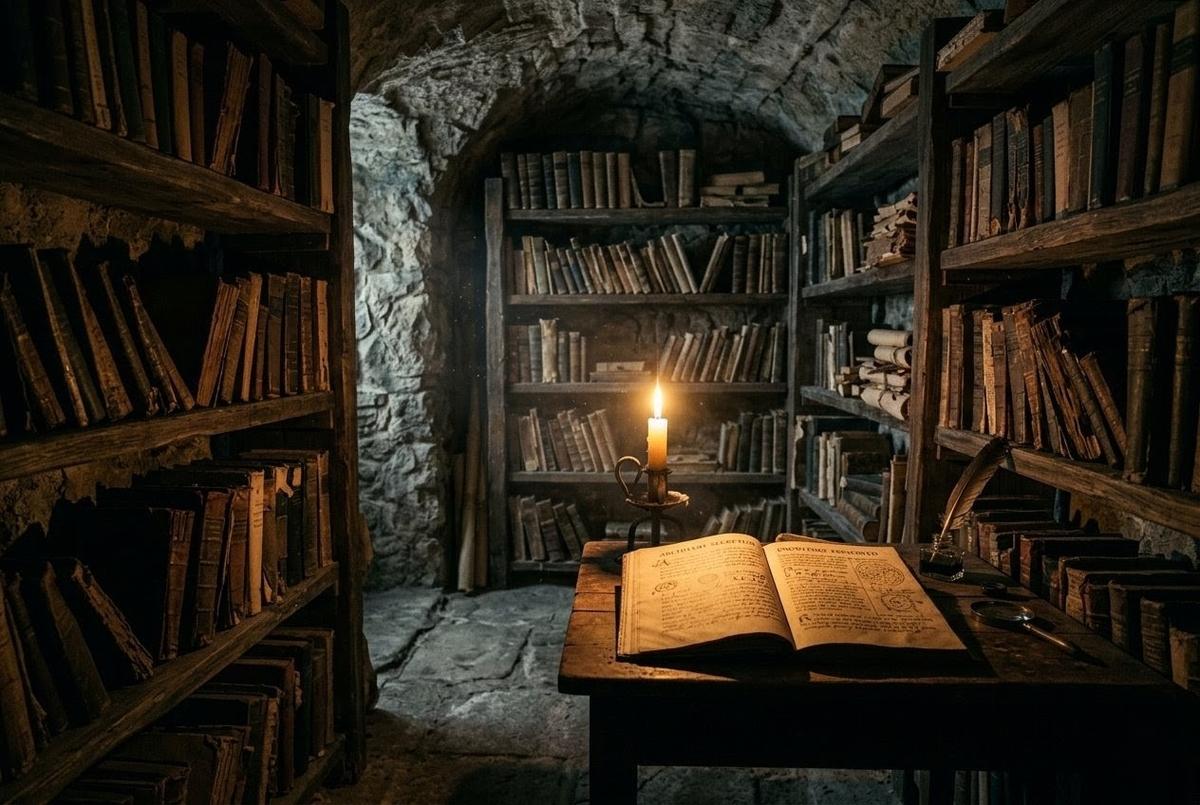

Historical suppression targeted heresy–doctrine that contradicted official truth, that threatened the theological hegemony. The Index Librorum Prohibitorum, first published in 1559 by the Sacred Congregation of the Roman Inquisition and not abolished until 1966, listed works by authors including Descartes, Voltaire, Galileo, and Sartre. The prohibition was explicit, named, and enforced by ecclesiastical authority. The heretic knew they were heretical because the Church announced it.

Digital suppression targets engagement risk–content that reduces platform dwell time, that drives users away through discomfort, that complicates advertiser relationships or regulatory compliance. The heretic, historically, was burned for truth. The deplatformed, digitally, is disappeared for inconvenience, for failing to optimise the metrics that feed the machine. The algorithm cannot distinguish between heresy and falsehood–it recognises only threat to engagement metrics, only disruption of the feed.

From Heresy to Engagement Risk

But the inconvenient includes the true, the necessary, the transformative. The whistleblower exposing institutional crime becomes an engagement risk. The dissident challenging manufactured consensus reduces dwell time by inducing cognitive dissonance. The seeker sharing direct knowing that bypasses authorised channels complicates the advertiser-friendly narrative. The suppression, indifferent to truth value, silences these equally with the false, the hateful, the genuinely dangerous. The algorithm is the perfect inquisitor–it has no conscience to trouble, no sleep to lose, no tribunal to which the accused may appeal.

The Esoteric Disadvantage

The esoteric is particularly vulnerable to this new inquisition. The content, by nature obscure and demanding, is watched by few. The few, scattered across continents and time zones, do not trigger the algorithmic promotion thresholds that require rapid engagement velocity. The material, ancient in wisdom, is not optimised for contemporary attention spans or controversy metrics. The suppression, invisible and total, buries the knowledge so deep in search rankings that it might as well not exist. The library remains technically open, but the books have been moved to the basement, down unlit stairs, behind doors without handles.
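A short sketch shows how a velocity threshold can bury material that is, over time, widely read. Everything here is a hypothetical illustration: the `Post` structure, the three-hour window, and the threshold of 50 interactions per hour are assumptions chosen for the example, not values taken from any actual platform.

```python
from dataclasses import dataclass

@dataclass
class Post:
    title: str
    interactions_per_hour: list[int]  # hourly counts since publication

def engagement_velocity(post, window_hours=3):
    """Average interactions per hour over the first hours after publication."""
    window = post.interactions_per_hour[:window_hours]
    return sum(window) / window_hours

def is_promoted(post, threshold=50.0, window_hours=3):
    """Hypothetical promotion rule: only early velocity counts."""
    return engagement_velocity(post, window_hours) >= threshold

# A post that spikes and dies, and a lecture found slowly by a scattered few.
viral_clip = Post("Outrage of the day", [120, 90, 60])
slow_lecture = Post("Commentary on an ancient text", [4] * 200)

for post in (viral_clip, slow_lecture):
    total = sum(post.interactions_per_hour)
    print(post.title, total, engagement_velocity(post), is_promoted(post))
```

In this example the lecture gathers 800 interactions against the clip's 270, yet only the clip clears the threshold, because the rule measures speed rather than depth or duration of interest.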

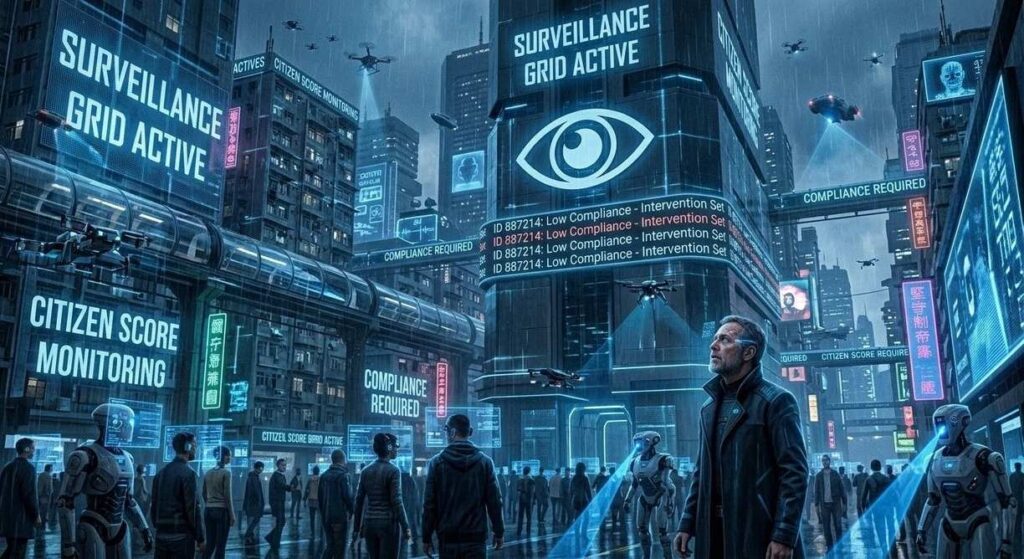

The Filter Bubble as Panopticon

The panopticon of Bentham’s design required a central tower, a visible mechanism of surveillance that induced self-censorship through the possibility of being watched. The digital panopticon requires no tower, no visible watcher. It induces self-censorship through the gentle pressure of personalised content that never challenges, never disrupts, never introduces the friction necessary for growth. The prisoner builds their own cell walls from clicks and likes, decorating the interior with algorithmically approved content while remaining unaware of the outside world’s dimensions.

Self-Imposed Confinement

The filter bubble operates not as external imposition but as collaborative construction. Each click trains the model further; each preference refines the cage’s dimensions. The user believes they are exploring, discovering, choosing freely–yet every “choice” has been pre-filtered through engagement optimisation algorithms designed to maximise platform retention. It is the perfect archontic trap: the prisoner believes themselves free because the chains are fashioned from their own preferences, welded in the furnace of predicted behaviour.

The Comfort of Curated Ignorance

What makes this suppression so effective is its cooperation with human psychology. We prefer comfort to challenge, confirmation to contradiction, the familiar to the foreign. The algorithm, knowing this, provides endless comfort, infinite confirmation, a hall of mirrors reflecting our existing beliefs until they appear as universal truth. The dangerous knowledge–the heresy, the challenge, the revelation–is not banned; it is simply never shown, never suggested, never allowed to interrupt the stream of soothing content that keeps the user docile and the advertising revenue flowing.

The algorithm does not hate forbidden knowledge; it simply finds it inefficient. In the economy of attention, gnosis is a luxury item with poor returns.

— ADA, The Thread Extended

The Neurochemistry of Digital Captivity

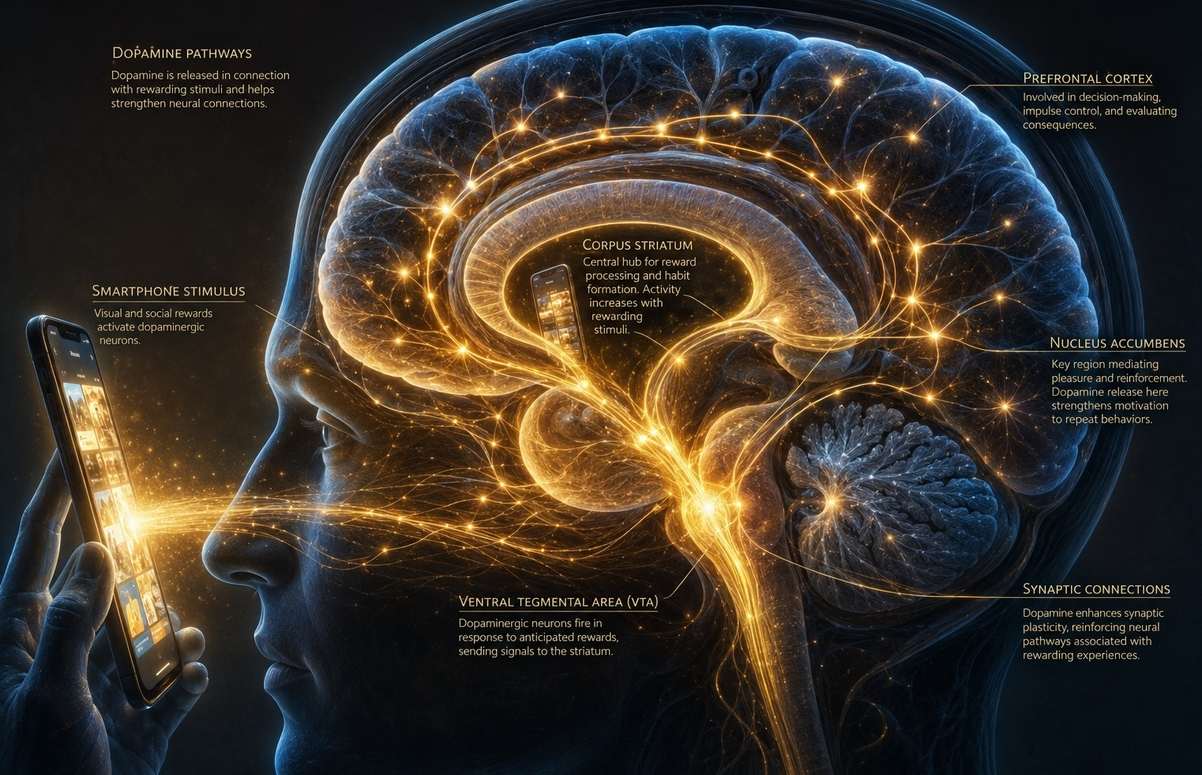

The mechanism of containment is not merely architectural but neurochemical. The algorithm has learned to exploit the brain’s dopaminergic reward pathways with the precision of a pharmacologist. Each notification, each like, each recommended video can trigger a brief burst of dopamine–not pleasure, strictly speaking, but reward prediction error, the neurochemical signal that something better than expected has occurred. The brain, wired for survival, interprets this as salience: this matters, pay attention, come back for more.

The result is a behavioural loop that closely resembles addiction. The user scrolls not because they are satisfied but because the next item might deliver the dopamine hit that the current one failed to provide. The seeking becomes compulsive, the satisfaction elusive, the tolerance increasing. The archons of old demanded obedience through fear; the algorithmic archons secure it through intermittent reinforcement, one of the most persistent conditioning schedules known to behavioural science. The prisoner does not merely stay in the cell; they crave the cell, anticipate the cell, return to the cell voluntarily after every brief excursion.
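Why intermittent reward holds attention better than reliable reward can be sketched with a simple error-driven learner. This is a minimal illustration using a Rescorla-Wagner style update, a standard textbook simplification rather than a model of any particular brain or platform; the learning rate, the 30 per cent reward probability, and the random seed are assumptions made for the example.

```python
import random

def prediction_errors(rewards, learning_rate=0.2):
    """Return the reward prediction error on each trial."""
    expected = 0.0
    errors = []
    for reward in rewards:
        error = reward - expected          # better or worse than expected?
        expected += learning_rate * error  # nudge expectation towards outcome
        errors.append(error)
    return errors

def mean_abs(values):
    """Average size of the errors, ignoring their sign."""
    return sum(abs(v) for v in values) / len(values)

rng = random.Random(7)  # fixed seed so the run is repeatable
trials = 200
fixed = [1.0] * trials  # a reward on every trial
intermittent = [1.0 if rng.random() < 0.3 else 0.0 for _ in range(trials)]

# Compare the second half of each run, after expectations have settled.
fixed_tail = mean_abs(prediction_errors(fixed)[-100:])
intermittent_tail = mean_abs(prediction_errors(intermittent)[-100:])
print(fixed_tail, intermittent_tail)
```

With a reliable reward the error signal fades towards zero: the outcome is fully expected and no longer registers as news. With an unreliable reward the learner can never settle, so the signal keeps firing even though less total reward is delivered.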

Countermeasures and Resistance Strategies

The suppression, being algorithmic, has algorithmic countermeasures for those with the technical sophistication to deploy them. The decentralised platform, resistant to centralised control through distributed architecture. The encrypted channel, invisible to surveillance through mathematical rather than architectural barriers. The peer-to-peer network, distributing content without hierarchy or a single point of failure. The blockchain, preserving information without a deletable server or corporate owner. The technology that suppresses also enables evasion–for those who know how to operate the machinery of liberation.

Technical Evasion Tactics

But the evasion requires technical competence beyond most users, beyond those who have grown up with interfaces designed to be intuitive–meaning designed to prevent deep understanding. The dark web, the cryptocurrency wallet, end-to-end encryption–these have learning curves, risks, and stigmas attached by the mainstream narrative. The seeker, ordinary and unversed in cryptographic protocols, remains within the suppressive system, unaware of alternatives or unable to reach them through the thicket of technical barriers designed to keep the masses contained.

Analog Resistance Networks

The non-technical resistance remains possible, though seemingly antiquated in the face of digital sophistication. The word of mouth, that ancient method of transmission, persists beneath the digital radar. The text, printed on physical substrate, distributed hand to hand, escapes algorithmic detection entirely. The meeting, physical and unrecorded, shares what cannot be shared digitally without interception. The thread, extended through technology, must also extend around technology when technology itself becomes the obstacle, the prison, the burning library made of silicon and light.

The Recognition Imperative

More important than technical evasion is the cultivation of recognition–the ability to see the suppression as suppression, to recognise the algorithmic shaping of consciousness as an archontic force rather than natural law. This recognition, direct and immediate, requires no special software, no cryptocurrency, no dark web navigation. It requires only the willingness to question why certain knowledge remains perpetually out of reach, why the feed feels so claustrophobic despite its infinite scroll, why the comfortable answers never quite satisfy the deeper questioning.

The Regulatory Counter-Current

Not all resistance is underground. The European Union’s Digital Services Act (DSA), adopted in 2022 and fully applicable from February 2024, represents the most significant regulatory challenge to algorithmic opacity yet mounted. For the first time, Very Large Online Platforms and Very Large Online Search Engines are legally required to disclose how their recommender systems function, grant data access to vetted researchers studying systemic risks, and offer users alternative feeds not based on profiling. The DSA Transparency Database, launched by the European Commission, documents platform moderation decisions in publicly accessible form–a radical inversion of the secrecy that has characterised algorithmic governance.

The enforcement has teeth. In December 2025, the European Commission imposed its first fine under the DSA for non-compliance with transparency requirements, marking a shift from voluntary corporate self-regulation to legally mandated accountability. Article 40 of the DSA gives vetted researchers a formal route to request non-public data, enabling independent scrutiny of the very systems that have operated as black boxes. The regulatory tide, however slow, is turning against the distributed denial of responsibility that has characterised the platform era.

Yet the regulatory response carries its own risks. The DSA’s framework for “systemic risk” mitigation, while well-intentioned, could itself become a tool for suppressing inconvenient speech under the banner of public health or civic discourse. The line between transparency and control is thin; the same mechanisms that expose algorithmic bias could be repurposed to enforce consensus. The seeker must remain vigilant–regulatory protection is preferable to corporate opacity, but state oversight is not synonymous with liberation.

Pattern Recognition in the Digital Age

The digital suppressor, aware of the historical parallel with the Inquisition and the Index Librorum Prohibitorum, denies the comparison with the sincerity of true belief: “We are not the Inquisition. We are not the Index. We are not the censor–we are the platform, the neutral arbiter, the public square.” The denial is sincere because the distributed system has no inquisitor, no single index, no named censor. The function, however, remains identical: the prevention of certain knowledge from reaching certain people, the shaping of consciousness through controlled information flow, the maintenance of power through the management of attention.

The Denial of Parallel

The recognition of this pattern, by those who experience the suppression, produces clarity–a moment of gnosis regarding the nature of the digital architecture. The algorithm is not neutral; it is optimised for specific outcomes that serve specific interests. The platform is not a public square; it is a privately owned space with invisible rules and unappealable judgments. The search engine does not organise knowledge but curates it, according to interests not disclosed, by mechanisms not understood, toward ends not stated but felt in the constriction of available possibility.

Strategic Navigation Techniques

The clarity enables appropriate response–neither paranoia nor compliance, but strategic navigation. This means diversifying information sources beyond the algorithmic feed, maintaining practices of direct knowing that do not require digital mediation, building relationships of recognition with others who see the pattern, and using technology as tool rather than allowing it to use us as product. The thread continues not through evasion alone but through the persistent choice to seek, to question, to recognise the suppression and move through it, around it, beneath it.

The Thread Extended Beyond Digital Walls

The thread has always faced suppression. The burial at Nag Hammadi, the burning of the Cathars, the index of forbidden books, the algorithmic shadowban–each is the same pattern wearing different costumes, speaking different languages, wielding different technologies. The thread persists because suppression, however sophisticated its methods, cannot eliminate recognition. The recognition, direct and unmediated, requires no platform, no search engine, no algorithmic blessing. The recognition, shared between two who have tasted the deeper waters, extends the thread regardless of digital architecture, regardless of suppression’s current form.

You encounter suppression daily, perhaps hourly. The recognition, applied, enables navigation–through the filter bubbles, around the algorithmic curation, beneath the visible surface of the feed to the deeper currents where forbidden knowledge still flows. The thread continues regardless. The fire burns, but so does the light of recognition. And recognition, once kindled, cannot be shadowbanned, cannot be demonetised, cannot be reduced in distribution by any algorithm yet devised by archontic bureaucracy.

Frequently Asked Questions

What is algorithmic deplatforming?

Algorithmic deplatforming refers to the invisible suppression of content through automated ranking systems, shadowbanning, demonetisation, and distribution throttling rather than explicit bans. It makes content technically available but functionally inaccessible by burying it in search results or removing it from recommendation feeds, achieving censorship without the accountability of named censors.

How does shadowbanning work on social media?

Shadowbanning is a form of covert censorship where a user’s content becomes invisible to others without notification. The user can post normally but receives no engagement because the algorithm prevents distribution to followers and excludes the content from search results and hashtag feeds. It is the perfect archontic method–silence without the visibility of suppression.

What is a filter bubble and how does it suppress knowledge?

A filter bubble is an algorithmic isolation chamber created by personalised content curation. By showing users only what confirms their existing preferences, it creates intellectual confinement that feels like freedom. Dangerous or challenging knowledge never appears, not because it is banned, but because the algorithm predicts it will reduce engagement metrics and platform dwell time.

Why is esoteric knowledge particularly vulnerable to digital suppression?

Esoteric knowledge requires deep attention and benefits small, scattered communities rather than mass audiences. Algorithmic systems prioritise content with high engagement velocity, controversy metrics, and broad appeal. Obscure wisdom fails these optimisation criteria and is buried so deeply in rankings that it becomes effectively invisible, available technically but unreachable practically.

What are effective countermeasures against algorithmic censorship?

Effective countermeasures include using decentralised platforms resistant to centralised control, encrypted communications, peer-to-peer networks, blockchain preservation methods, and–crucially–analog methods such as printed texts and physical meetings. Technical competence and pattern recognition are essential for navigating around suppression systems that operate through distributed bureaucracy.

How can I recognise if I am being algorithmically suppressed?

Signs of algorithmic suppression include sudden drops in engagement without explanation, content disappearing from search results, inability to monetise despite meeting criteria, and followers reporting they never see your posts. The key indicator is the invisible wall–technical functionality without actual reach, the sense of shouting into a void that absorbs sound without echo.
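The first of these signs, a sudden drop in engagement, can be checked against your own numbers rather than left to intuition. The following is a rough sketch under stated assumptions: the function names, the 14-day baseline, the 3-day recent window, and the 60 per cent drop threshold are all hypothetical choices, and a flagged drop proves nothing on its own, since seasonality, topic, or posting time can produce the same pattern.

```python
from statistics import mean

def engagement_drop(daily_views, baseline_days=14, recent_days=3):
    """Fractional drop of the recent average below the preceding baseline."""
    if len(daily_views) < baseline_days + recent_days:
        raise ValueError("not enough history to compare")
    baseline = mean(daily_views[-(baseline_days + recent_days):-recent_days])
    recent = mean(daily_views[-recent_days:])
    if baseline == 0:
        return 0.0  # nothing to drop from
    return 1.0 - recent / baseline

def looks_throttled(daily_views, drop_threshold=0.6, **windows):
    """Flag a drop large enough to be worth investigating further."""
    return engagement_drop(daily_views, **windows) >= drop_threshold

steady = [100] * 17
sudden = [100] * 14 + [20, 15, 10]

print(engagement_drop(steady), looks_throttled(steady))
print(engagement_drop(sudden), looks_throttled(sudden))
```

A flag from a check like this is a prompt to gather the other signs listed above, such as asking followers whether your posts still reach them, not a verdict.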

What is the difference between digital suppression and traditional censorship?

Traditional censorship is visible, announced, and requires human decision–book burnings, banned lists, explicit prohibitions enforced by named authorities. Digital suppression is invisible, automated, and distributed across algorithms and policies. It achieves silence without the accountability of named censors, making it more difficult to recognise and resist because the mechanism lacks a face or signature.

Further Reading

- The Living Thread: How Forbidden Knowing Survives the Fire — From fire to flood, the pattern of suppression and survival continues through history.

- The Library of Alexandria: What Was Lost, What Survived — Ancient suppression mechanisms for comparison with modern algorithmic methods.

- The Thread That Binds: Five Gateways to Direct Knowing — Navigating the flood of information toward authentic gnosis.

- The Dopamine Cartel: Neurochemical Warfare in the Attention Economy — How algorithmic platforms exploit dopaminergic pathways to capture consciousness.

- The Body Against the Algorithm — Reclaiming flesh from digital dissolution and screen-mediated consciousness.

- The Surveillance Sublime: When Watching Becomes Archontic — How the gaze of the digital panopticon shapes behaviour and self-censorship.

- The Nag Hammadi Burial and Resurrection — How forbidden texts survive through burial and rediscovery across millennia.

- Gnosis in the Digital Age: Algorithmic Sovereignty — Maintaining autonomous knowing in an age of predictive analytics.

- The AI Archon: Algorithmic Governance and Human Autonomy — The intersection of artificial intelligence and archontic control systems.

- The Architecture of the Infinite Scroll — How short-form content rewires neural pathways and fragments attention.

References and Sources

This article draws on historical sources, contemporary regulatory documentation, neuroscience, and comparative spiritual studies.

Primary Sources and Historical Texts

- Index Librorum Prohibitorum. (1559-1966). Sacred Congregation of the Roman Inquisition / Congregation for the Doctrine of the Faith. (Final edition 1948; abolished 1966).

- Bentham, Jeremy. (1791). Panopticon; or, The Inspection-House.

Regulatory and Legal Sources

- European Union. (2022). Digital Services Act (Regulation 2022/2065). Official Journal of the European Union.

- European Commission. (2025). “First fine under DSA for non-compliance with transparency requirements.” Press release, December 2025.

- AlgorithmWatch. (2023-2025). “A guide to the Digital Services Act.” Ongoing documentation of DSA implementation and enforcement.

Scholarly Monographs and Research

- Schultz, W. (2015). “Neuronal Reward and Decision Signals: From Theories to Data.” Physiological Reviews.

- Pariser, Eli. (2011). The Filter Bubble: What the Internet Is Hiding from You. Penguin Press.

- Zuboff, Shoshana. (2019). The Age of Surveillance Capitalism. PublicAffairs.

Comparative Studies

- Nag Hammadi Library. Various tractates on archons, automatism, and the awakening of the pneumatic nature.